In 2021, Zillow shut down its algorithmic home-buying division after a pricing model misjudged property values so badly that the company wrote off $304 million and laid off a quarter of its workforce. A year earlier, the model had been celebrated as a competitive advantage. The lesson was expensive but clear: when models fail, the consequences are not hypothetical. They are financial, reputational, and sometimes existential.

Model risk management is the discipline built to prevent exactly these failures. Born in banking, shaped by regulation, and now essential for any organisation that deploys AI or machine learning, it offers a structured approach to ensuring that the models you trust with decisions actually deserve that trust.

What You Will Learn

· What model risk management means in practical terms, and where the concept originated

· Why model failures cost billions, with real-world examples that illustrate the stakes

· The regulatory frameworks (SR 11-7, PRA SS1/23, EU AI Act) that now mandate model governance

· The four pillars of an effective model risk management framework

· How explainability and interpretability reduce model risk

· A practical roadmap for AI teams to start building model governance today

What Is Model Risk Management?

At its core, model risk management (MRM) is the practice of identifying, assessing, mitigating, and monitoring the risks that arise whenever a quantitative model is used to inform decisions. A "model" in this context is any system that takes inputs, applies assumptions or algorithms, and produces outputs that influence actions: credit scores, fraud detectors, pricing engines, demand forecasters, recommendation systems, and increasingly, large language models.

The concept was formalised in 2011 when the U.S. Federal Reserve and the Office of the Comptroller of the Currency issued SR 11-7, the Supervisory Guidance on Model Risk Management. That guidance established a principle that has only grown more relevant: model risk should be treated with the same seriousness as credit risk, market risk, or operational risk. Models are not neutral tools. They encode assumptions, reflect data limitations, and can amplify errors at scale.

The OCC Comptroller's Handbook on Model Risk Management defines model risk as arising from two sources: fundamental errors in the model itself (wrong assumptions, flawed data, coding mistakes) and the misuse or misapplication of a model beyond its intended scope. Both sources matter, and both require governance.


For decades, MRM was primarily a banking concern. But the explosion of AI and machine learning across every industry has changed the calculus entirely. If your organisation uses a model to make or influence decisions, you have model risk, whether you manage it or not.

Why Model Risk Cannot Be Ignored

Model failures are not rare edge cases. They are a recurring pattern across industries, and their consequences are severe.

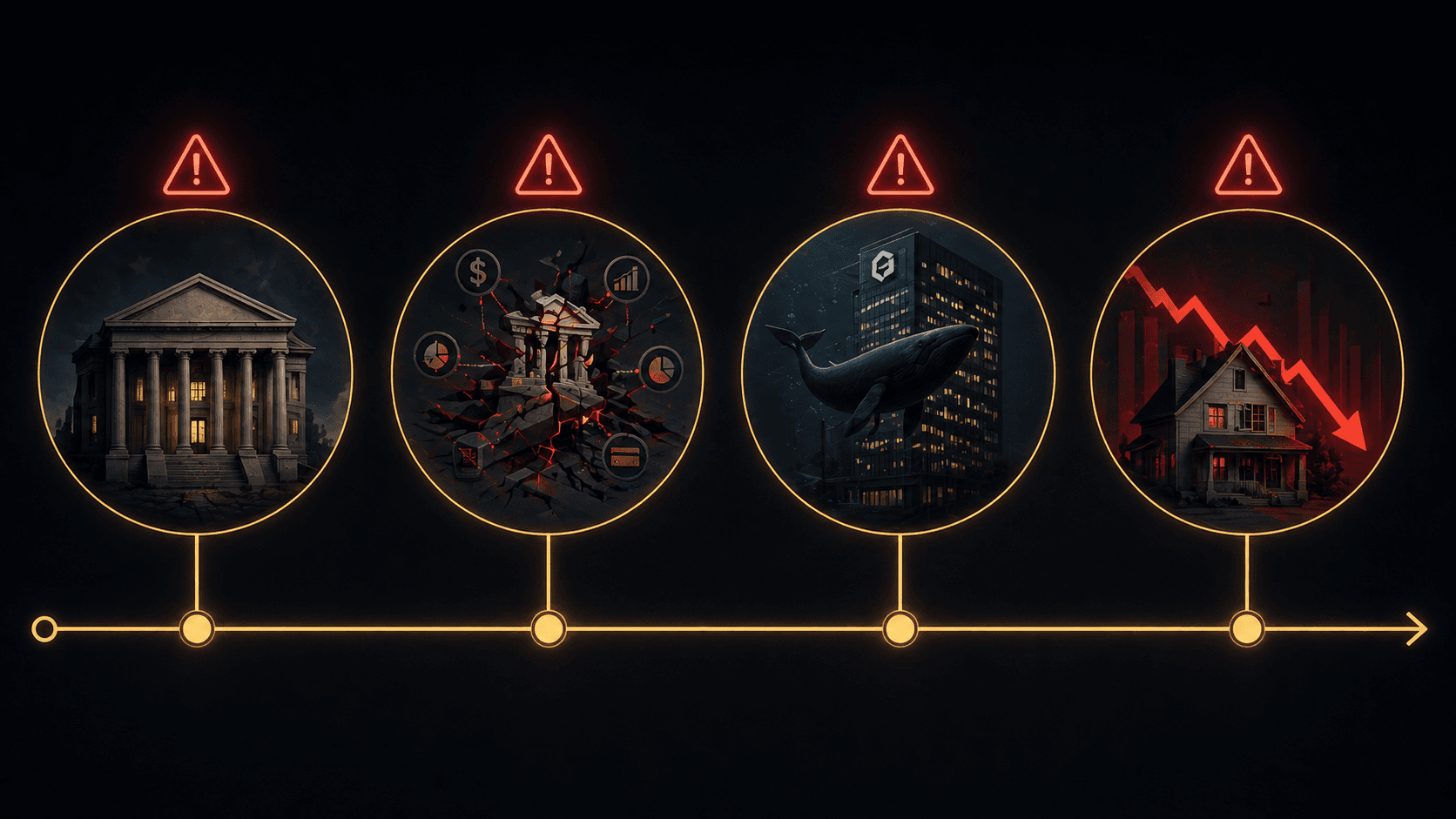

Real-World Failures and Their Costs

Long-Term Capital Management (1998) lost approximately $4.5 billion after its trading models, built on assumptions of normally distributed returns, failed to account for extreme market events. The fund's collapse prompted a recapitalisation by its creditors, organised by the Federal Reserve Bank of New York, to prevent broader systemic damage.

The 2008 financial crisis was amplified by the financial industry's over-reliance on the Gaussian copula model for pricing collateralised debt obligations. The model systematically underestimated the probability of correlated defaults, contributing to trillions in losses worldwide.

JPMorgan's "London Whale" incident (2012) resulted in $6 billion in trading losses and nearly $1 billion in regulatory fines. A contributing factor was a spreadsheet error in the bank's Value-at-Risk model that understated the true risk exposure.

Zillow Offers (2021) suffered a $304 million inventory write-down when its home price prediction model consistently overvalued properties. The division was shut down entirely, and approximately 2,000 employees lost their jobs.

These are not obscure technical failures. They are strategic disasters rooted in a common problem: organisations trusted models without adequate frameworks to challenge, validate, and monitor them. As AI models become more complex and more deeply embedded in decision-making, the surface area for model risk only grows. Research suggests that 91% of machine learning models experience performance drift within several years of deployment, making ongoing oversight essential.

The Regulatory Landscape: From Banking Rules to Broad AI Governance

Model risk management is no longer a voluntary best practice. Regulators across the globe are converging on the principle that organisations deploying models, especially AI models, must demonstrate structured governance. Understanding this regulatory landscape is important because it signals the direction of travel for all industries, not just banking.

United States: SR 11-7 and the Foundation

The Federal Reserve's SR 11-7 remains the foundational document for model risk management in U.S. banking. It established three pillars that still define the discipline: robust model development, implementation, and use; effective model validation; and sound governance with clear policies and controls. While it was written before the current AI boom, its principles are technology-agnostic and apply directly to machine learning systems.

United Kingdom: PRA SS1/23

In May 2023, the Bank of England's Prudential Regulation Authority issued SS1/23, the first dedicated supervisory statement on model risk management. Its five principles came into force in May 2024 and cover the entire model lifecycle, from defining what constitutes a model to board-level accountability for model risk appetite. The PRA held roundtable sessions in October 2025 specifically to discuss how AI and machine learning technologies fit within these principles.

European Union: The AI Act and Article 9

The EU AI Act takes model risk management beyond financial services entirely. Article 9 mandates that providers of high-risk AI systems establish a continuous, iterative risk management system throughout the AI lifecycle. This includes identifying foreseeable risks, estimating their severity, implementing mitigation measures, and testing to verify that residual risk is acceptable. For any AI team building systems that affect people's access to credit, employment, education, or essential services, this is not optional. If you want to understand the full scope of the EU AI Act and its enforcement timeline, our detailed analysis of the EU AI Act in 2026 covers it comprehensively.

The NIST AI Risk Management Framework

For organisations outside regulated financial services, the NIST AI Risk Management Framework (AI RMF 1.0) offers a voluntary, sector-agnostic framework built around four functions: Govern, Map, Measure, and Manage. While not legally binding, it provides a structured starting point for AI teams that want to build governance before regulation forces them to.

The pattern across all of these frameworks is unmistakable: regulators expect organisations to know what models they are running, to validate those models independently, to monitor them continuously, and to hold leadership accountable for model risk. The days of deploying a model and forgetting about it are over.

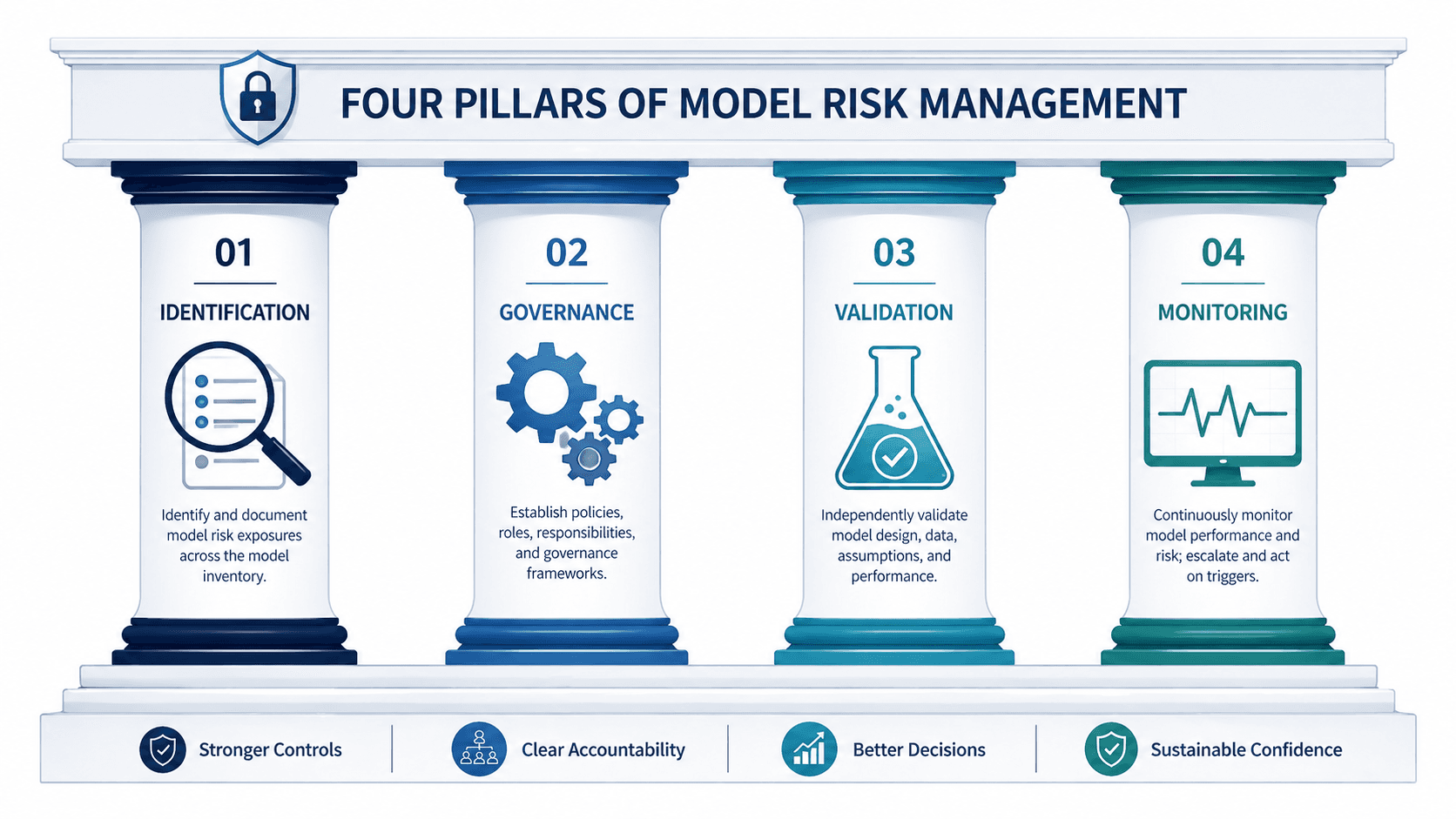

The Four Pillars of a Model Risk Management Framework

Whether you are building a framework from scratch or adapting an existing one for AI and machine learning, effective model risk management rests on four pillars. These pillars reflect the consensus across SR 11-7, PRA SS1/23, the Cloud Security Alliance's AI Model Risk Management Framework, and leading industry practice.

Pillar 1: Model Identification and Inventory

You cannot manage risk in models you do not know about. The first pillar is establishing a comprehensive model inventory: a central register of every model in use across the organisation, along with metadata such as its purpose, owner, risk rating, data inputs, and deployment status. This sounds straightforward, but in practice it is one of the hardest steps. Research from Yields.io highlights that effective MRM in 2026 starts with visibility. Shadow models, including spreadsheet-based calculations and ad hoc scripts that quietly influence decisions, are among the most common sources of unmanaged model risk.
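A model inventory can start as a very small data structure. The sketch below is one possible shape, not a prescribed schema: the `ModelRecord` class, its field names, and the `find_governance_gaps` helper are illustrative assumptions built from the metadata listed above (purpose, owner, risk rating, data inputs, deployment status). It flags production models that lack an owner or a risk rating, which is typically how shadow models show up once they are registered.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ModelRecord:
    """One inventory entry. Field names are illustrative assumptions."""
    model_id: str
    purpose: str
    owner: Optional[str]
    risk_rating: Optional[str]  # e.g. "high", "medium", "low"
    data_inputs: List[str] = field(default_factory=list)
    deployment_status: str = "development"  # e.g. "production", "retired"


def find_governance_gaps(inventory: List[ModelRecord]) -> Dict[str, List[str]]:
    """Map each production model to the governance fields it is missing."""
    gaps: Dict[str, List[str]] = {}
    for record in inventory:
        if record.deployment_status != "production":
            continue  # only models that currently influence decisions
        missing = []
        if not record.owner:
            missing.append("owner")
        if record.risk_rating is None:
            missing.append("risk_rating")
        if missing:
            gaps[record.model_id] = missing
    return gaps


# Hypothetical entries, including a spreadsheet-based "shadow" model.
inventory = [
    ModelRecord("credit-score-v3", "Retail credit decisions", "j.doe", "high",
                ["bureau_data", "application_form"], "production"),
    ModelRecord("pricing-sheet", "Quarterly price adjustments", None, None,
                ["sales_export.xlsx"], "production"),
    ModelRecord("churn-prototype", "Churn experiments", None, None, [], "development"),
]

gaps = find_governance_gaps(inventory)
print(gaps)  # {'pricing-sheet': ['owner', 'risk_rating']}
```

The same records could just as well live in a spreadsheet or a database table; the point is that every production model has a row and every row has an accountable owner.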

Pillar 2: Model Governance

Governance defines who is responsible for model risk and how decisions about models are made. This includes clear ownership at both the individual model level and the enterprise level; a defined model risk appetite approved by senior leadership; policies covering model development standards, approval workflows, and acceptable use; and a "three lines of defence" structure where model developers, independent validators, and internal audit each play distinct roles. The PRA's SS1/23 is explicit that boards must be involved in setting model risk appetite and understanding the aggregate model risk exposure across the business.

Pillar 3: Model Validation

Validation is the independent assessment of whether a model is fit for purpose. It goes beyond checking whether the code runs correctly. Effective validation includes conceptual soundness review (are the model's assumptions appropriate?), backtesting against historical data, benchmarking against alternative approaches, sensitivity analysis to understand how outputs change with inputs, and stress testing under extreme scenarios. For AI and machine learning models, validation must also address data representativeness, feature stability, fairness across demographic groups, and the model's behaviour on out-of-distribution inputs. Our exploration of interpretable versus black-box models discusses how model architecture choices directly affect how thoroughly a model can be validated.
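Of the validation techniques listed above, sensitivity analysis is the easiest to illustrate in a few lines. The following is a minimal one-at-a-time sketch: each input is increased by 10% while the others are held fixed, and the change in output is recorded. The `default_probability` function and its coefficients are hypothetical stand-ins for whatever model is under review.

```python
import math
from typing import Callable, Dict


def default_probability(features: Dict[str, float]) -> float:
    """Hypothetical logistic scoring model, used only for illustration."""
    z = (-2.0
         + 3.0 * features["debt_to_income"]
         - 0.05 * features["years_employed"]
         + 0.3 * features["missed_payments"])
    return 1.0 / (1.0 + math.exp(-z))


def one_at_a_time_sensitivity(model: Callable[[Dict[str, float]], float],
                              baseline: Dict[str, float],
                              rel_change: float = 0.10) -> Dict[str, float]:
    """Change in model output when each input is bumped by rel_change alone."""
    base_output = model(baseline)
    effects = {}
    for name, value in baseline.items():
        bumped = dict(baseline)  # copy so the baseline is never mutated
        bumped[name] = value * (1.0 + rel_change)
        effects[name] = model(bumped) - base_output
    return effects


baseline = {"debt_to_income": 0.35, "years_employed": 6.0, "missed_payments": 1.0}
effects = one_at_a_time_sensitivity(default_probability, baseline)

# Print inputs ordered by the size of their effect on the output.
for name, delta in sorted(effects.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: {delta:+.4f}")
```

A validator would look for inputs whose effect is implausibly large, has the wrong sign, or is zero when it should not be. A relative bump leaves zero-valued inputs unchanged and ignores interactions between inputs, so this is a first-pass check rather than a complete analysis.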

Pillar 4: Model Monitoring and Reporting

Deploying a model is not the end of the process; it is the beginning of a new risk phase. Continuous monitoring tracks model performance in production, detects drift (when a model's accuracy degrades because the real world has changed), and flags anomalies before they cause damage. Monitoring should include automated alerts when key performance metrics cross predefined thresholds, regular reviews of input data quality and distribution shifts, periodic re-validation on a schedule proportional to the model's risk rating, and clear escalation paths when a model is underperforming. The AI model risk management market, now valued at over $7 billion, is growing rapidly precisely because organisations are realising that post-deployment monitoring cannot be done manually at scale.
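One widely used statistic for detecting input distribution shift is the Population Stability Index (PSI), which compares the binned distribution of a feature in production against a reference sample. The sketch below is a plain-Python illustration under stated assumptions: the bin edges, the sample data, and the 0.10 and 0.25 thresholds (a common rule of thumb, not a regulatory requirement) would all need to be set for the model in question.

```python
import math
from typing import List, Sequence


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Fraction of values in each bin; edges are sorted interior cut points."""
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[sum(1 for e in edges if v >= e)] += 1
    return [c / len(values) for c in counts]


def population_stability_index(expected: Sequence[float],
                               actual: Sequence[float],
                               edges: Sequence[float],
                               eps: float = 1e-6) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    psi = 0.0
    for e, a in zip(bin_fractions(expected, edges), bin_fractions(actual, edges)):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        psi += (a - e) * math.log(a / e)
    return psi


def drift_status(psi: float, warn: float = 0.10, alert: float = 0.25) -> str:
    """Map a PSI value to a status using assumed rule-of-thumb thresholds."""
    if psi >= alert:
        return "alert"
    if psi >= warn:
        return "warn"
    return "ok"


# Hypothetical data: a reference sample and a production sample shifted upwards.
reference = [i / 100 for i in range(100)]
live_shifted = [0.8 * v + 0.2 for v in reference]
edges = [0.25, 0.5, 0.75]

psi_same = population_stability_index(reference, reference, edges)
psi_shifted = population_stability_index(reference, live_shifted, edges)
print(drift_status(psi_same), drift_status(psi_shifted))  # ok alert
```

In practice a check like this would run on a schedule for each monitored input, with "warn" and "alert" statuses routed to the model owner through the escalation path.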

Where Explainability Fits In: The Risk-Reducing Power of Interpretability

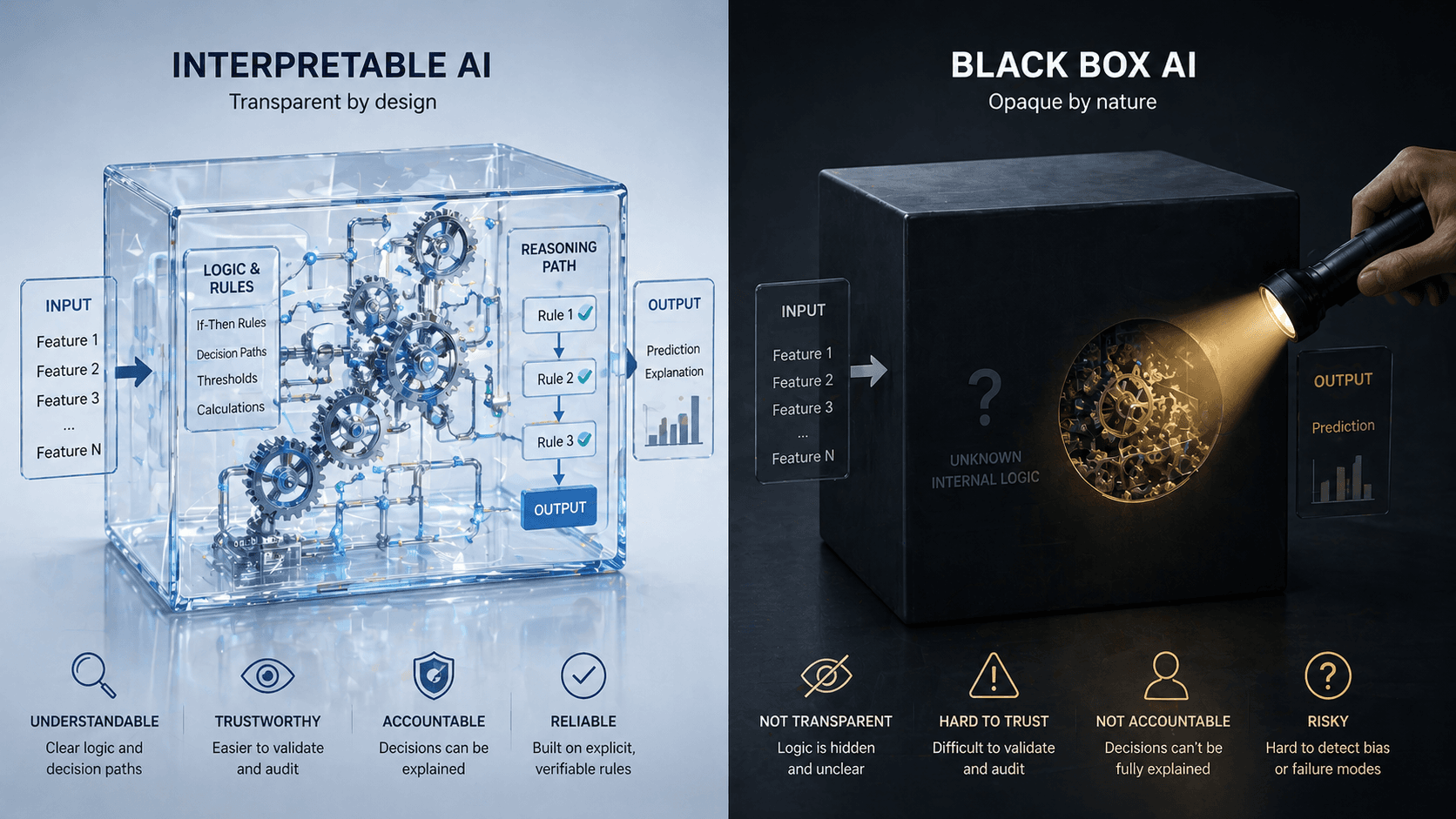

There is a direct and powerful connection between model risk management and explainable AI. A model you can explain is a model you can challenge, validate, and trust. A model you cannot explain is a model where risks hide in the dark.

Interpretable models, such as logistic regression, decision trees, scorecards, and Explainable Boosting Machines, allow validators to inspect the relationship between inputs and outputs directly. When a model's logic is transparent, errors in assumptions, data issues, or unexpected behaviour become visible during validation rather than surfacing only after deployment. Our deep dive into why explainable AI matters explores this dynamic in detail.
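To make "inspect the relationship between inputs and outputs directly" concrete, consider a logistic regression. Each coefficient converts to an odds ratio, and each prediction decomposes exactly into per-feature contributions to the log-odds. The coefficients, intercept, and applicant values below are hypothetical; the sketch only shows what a validator can read off without any secondary explanation tool.

```python
import math
from typing import Dict, Tuple

# Hypothetical fitted coefficients for a default-risk model.
INTERCEPT = -3.0
COEFFICIENTS = {"debt_to_income": 2.1, "years_employed": -0.08, "missed_payments": 0.65}


def odds_ratios(coefficients: Dict[str, float]) -> Dict[str, float]:
    """Multiplicative change in the odds for a one-unit increase in each input."""
    return {name: math.exp(beta) for name, beta in coefficients.items()}


def explain(applicant: Dict[str, float]) -> Tuple[Dict[str, float], float]:
    """Return per-feature log-odds contributions and the predicted probability."""
    contributions = {name: beta * applicant[name] for name, beta in COEFFICIENTS.items()}
    log_odds = INTERCEPT + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    return contributions, probability


ratios = odds_ratios(COEFFICIENTS)
applicant = {"debt_to_income": 0.4, "years_employed": 5.0, "missed_payments": 2.0}
contributions, probability = explain(applicant)

for name, value in sorted(contributions.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: {value:+.2f} log-odds (odds ratio per unit: {ratios[name]:.2f})")
print(f"predicted probability: {probability:.3f}")
```

A validator can check each sign and magnitude against domain knowledge: here, longer employment lowers the odds and missed payments raise them, which is the kind of sanity check that is much harder to perform on an opaque model.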

Post-hoc explanation methods like SHAP and LIME can make black-box models easier to scrutinise, but they add a layer of approximation rather than providing true transparency. From a model risk perspective, a model that is inherently interpretable is generally easier to govern than one that requires secondary tools to explain its behaviour.

This does not mean that complex models should never be used. It means that model risk management frameworks should account for the additional governance burden that opaque models create. When a model's complexity is justified by significant performance gains in a well-defined use case, the framework should compensate with stronger validation, more frequent monitoring, and more rigorous documentation. When the performance difference is marginal, the interpretable model is almost always the better risk-adjusted choice.

What This Means for AI Teams Outside Finance

If you work in technology, healthcare, insurance, human resources, or any field that deploys machine learning, the principles of model risk management apply to you, even if your industry does not yet have its own SR 11-7.

Consider the parallels. A healthcare algorithm that predicts patient risk scores affects treatment decisions. A hiring model that ranks candidates affects livelihoods. A content recommendation system that prioritises engagement affects public discourse. Each of these systems carries model risk: the potential for harm arising from errors, biases, or misapplications of the model.

The EU AI Act makes this explicit by extending risk management requirements to high-risk AI systems across sectors, including employment, education, law enforcement, and essential services. But even without regulation, the business case is compelling. Organisations that govern their models well avoid costly failures, build trust with users and regulators, and create more resilient systems.

The banking sector has spent fifteen years refining model risk management practices. AI teams in other industries do not need to start from zero. They can adapt the proven frameworks, scaled to fit their organisational size and risk profile, and build governance that is proportionate, practical, and effective. Understanding foundational concepts like structured versus unstructured data and how information flows through organisations is also part of the foundation, because model risk often traces back to data quality issues.

Getting Started: A Practical Roadmap for AI Teams

Building a model risk management capability does not require a massive budget or a dedicated team of fifty specialists. It requires intentionality and a willingness to treat models as assets that need ongoing care. Here is a practical starting point:

1. Define what counts as a model. Start broad. Any system that takes inputs, applies logic or learned parameters, and produces outputs that inform decisions qualifies. Include spreadsheet models, rule-based systems, and ML pipelines.

2. Build a model inventory. Document every model in production: its purpose, owner, data sources, last validation date, and risk tier. A simple spreadsheet is a valid starting point.

3. Assign clear ownership. Every model should have a named owner responsible for its performance, an independent reviewer for validation, and an escalation path for issues.

4. Establish a validation cadence. High-risk models should be validated before deployment and re-validated at least annually. Lower-risk models can follow a lighter schedule. The key is that no model runs indefinitely without scrutiny.

5. Implement basic monitoring. Track key performance metrics over time. Set thresholds for acceptable drift and build alerting when those thresholds are breached. Even simple statistical tests for input distribution shifts can catch problems early.

6. Document everything. Model documentation should cover the model's purpose and limitations, the data it was trained on, its known weaknesses, and the conditions under which it should not be used. The NIST AI RMF provides a useful structure for this documentation.

7. Start small, iterate. You do not need to build a full enterprise MRM programme on day one. Start with your highest-risk models, prove the value of governance, and expand from there.
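Steps 2 and 4 of the roadmap can be combined into a simple automated check: given an inventory with a last validation date and a risk tier per model, list the models whose re-validation is overdue. This is a sketch under assumptions. The annual interval for high-risk models follows the guidance above, while the intervals for medium and low tiers, the dictionary keys, and the example models are hypothetical.

```python
from datetime import date
from typing import Dict, List, Optional

# Maximum days between validations per risk tier.
# "high" reflects the annual cadence above; the other values are assumed.
REVALIDATION_DAYS: Dict[str, int] = {"high": 365, "medium": 730, "low": 1095}


def overdue_models(inventory: List[dict], today: date) -> List[str]:
    """Names of models never validated or past their tier's interval."""
    overdue = []
    for model in inventory:
        last: Optional[date] = model["last_validated"]
        limit = REVALIDATION_DAYS[model["risk_tier"]]
        if last is None or (today - last).days > limit:
            overdue.append(model["name"])
    return overdue


# Hypothetical inventory rows, as they might be exported from a spreadsheet.
inventory = [
    {"name": "fraud-detector", "risk_tier": "high", "last_validated": date(2024, 11, 1)},
    {"name": "demand-forecast", "risk_tier": "medium", "last_validated": date(2025, 3, 10)},
    {"name": "faq-ranker", "risk_tier": "low", "last_validated": None},
]

overdue = overdue_models(inventory, today=date(2026, 1, 15))
print(overdue)  # ['fraud-detector', 'faq-ranker']
```

Treating a missing validation date as overdue is a deliberate choice here: a model with no recorded validation is exactly the case the cadence is meant to catch.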

Conclusion: Governance Is Not Bureaucracy, It Is Risk Intelligence

Model risk management is not about creating paperwork or slowing down innovation. It is about building the organisational capability to trust your models, because you have tested them, monitored them, and prepared for the possibility that they are wrong.

The organisations that do this well do not just avoid disasters. They build better models, make better decisions, and earn the trust of regulators, customers, and partners. In a world where AI models increasingly shape outcomes that affect people's finances, health, and opportunities, that trust is not a luxury. It is a competitive advantage and a moral responsibility.

Whether you are a data scientist, an AI engineer, a product manager, or a risk professional, model risk management is now part of your domain. The question is not whether your organisation has model risk. The question is whether you are managing it.

Continue Reading

· Interpretable vs Black-Box Models: The Trade-Off That Defines Modern AI

· Why Explainable AI Matters More Than You Think

· The EU AI Act in 2026: What It Actually Means for Business and Innovation