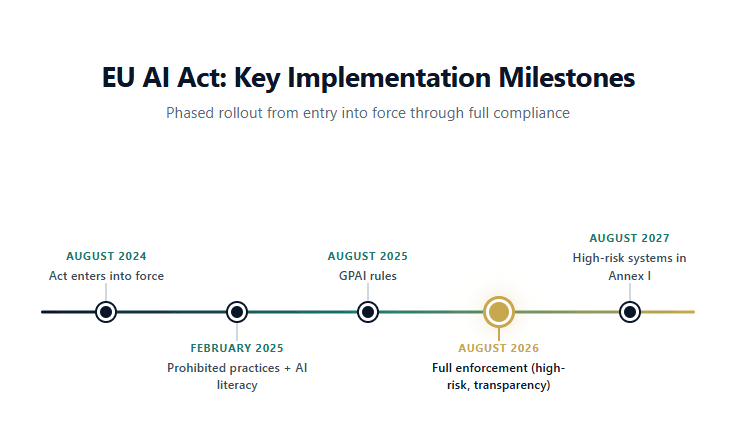

On 2 August 2026, the EU AI Act, the most far-reaching AI regulation adopted anywhere to date, reaches its main application date. Most of its remaining provisions will then apply across all 27 member states, bringing binding obligations for anyone building, deploying, or importing AI systems into the European market. Rules for high-risk AI embedded in already-regulated products follow a year later, on 2 August 2027. For financial services, healthcare, education, and employment, the rules are especially consequential.

Four months from now, organisations that have not classified their AI systems, documented their risk management processes, or trained their staff on AI literacy could face serious penalties. Breaches of most obligations carry fines of up to 15 million euros or 3% of global annual turnover, and the ceiling rises to 35 million euros or 7% for prohibited practices. That is not a distant policy discussion. It is an operational deadline.
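To make the scale concrete, here is a minimal arithmetic sketch using the 35 million euro and 7% figures quoted above. It assumes the "whichever is higher" rule that the Act applies to larger undertakings; the function name and the example turnovers are hypothetical, and the sketch ignores the different treatment of SMEs.

```python
def penalty_ceiling(turnover_eur: int, fixed_cap_eur: int, turnover_share_pct: int) -> int:
    """Return the higher of a fixed cap and a percentage of annual turnover."""
    turnover_based = turnover_eur * turnover_share_pct // 100
    return max(fixed_cap_eur, turnover_based)


# Figures quoted in the text: 35 million euros or 7% of global annual turnover.
FIXED_CAP_EUR = 35_000_000
TURNOVER_SHARE_PCT = 7

# Hypothetical firms, for illustration only.
large_bank = penalty_ceiling(2_000_000_000, FIXED_CAP_EUR, TURNOVER_SHARE_PCT)
mid_sized_firm = penalty_ceiling(100_000_000, FIXED_CAP_EUR, TURNOVER_SHARE_PCT)

print(large_bank)      # 140000000 (7% of turnover exceeds the fixed cap)
print(mid_sized_firm)  # 35000000 (the fixed cap exceeds 7% of turnover)
```

The point of the example is that for large institutions the percentage, not the headline euro figure, sets the ceiling.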

Yet much of the commentary around the EU AI Act remains abstract: risk tiers, legal definitions, compliance checklists stripped of context. This post takes a different approach. We will walk through what the Act actually requires, who it affects, how it interacts with existing regulations like GDPR and DORA, and what it means specifically for organisations in financial services. If you build, buy, or use AI in Europe, this is the article to read before August.

What You Will Learn

· How the EU AI Act is structured and what changes on 2 August 2026

· Which AI systems are classified as high-risk, with a focus on financial services

· The obligation you may already be breaking: Article 4 and AI literacy

· How the AI Act layers onto GDPR and DORA to create a regulatory stack

· The innovation debate: what startups and SMEs are concerned about, and why the picture is more nuanced than it appears

How the EU AI Act Works: A Risk-Based Framework

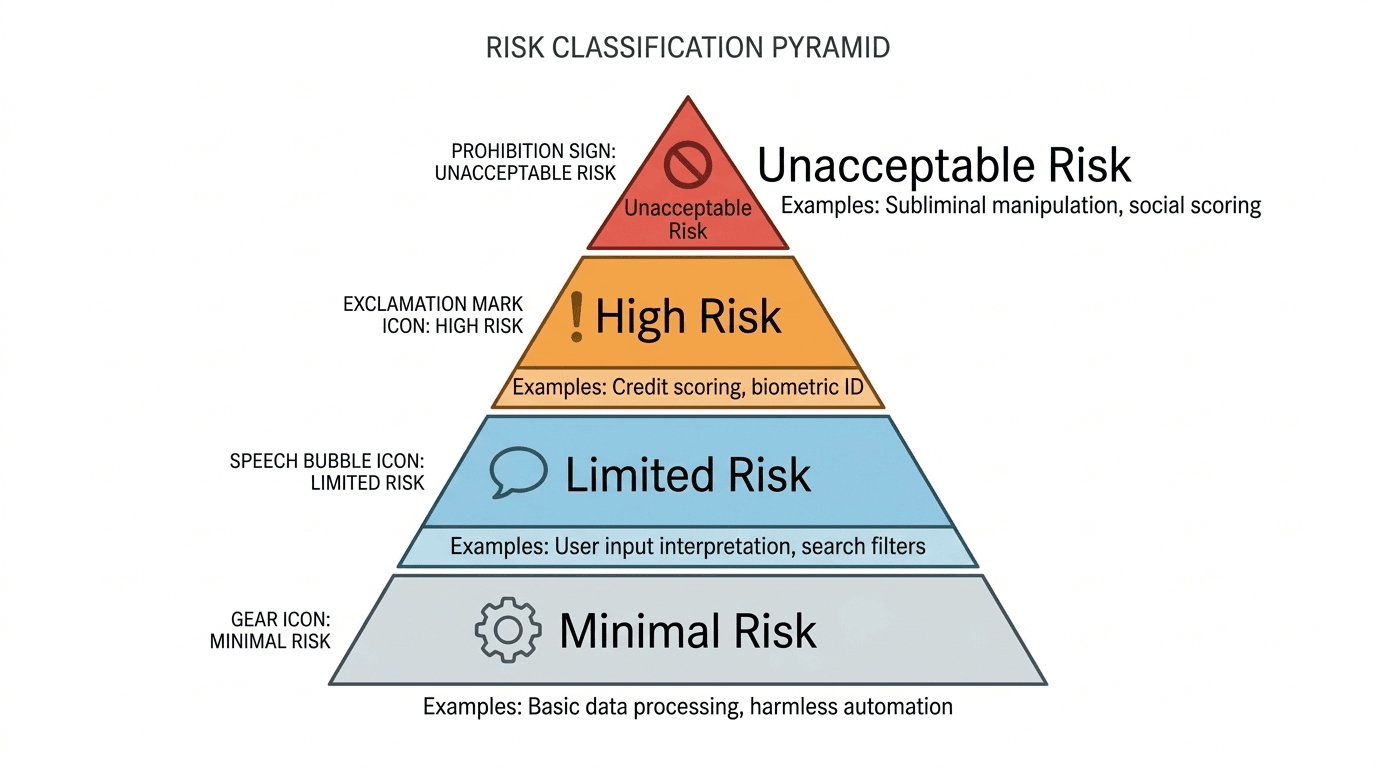

The EU AI Act takes a risk-based approach to regulating artificial intelligence. Rather than applying the same rules to every AI system, it classifies systems into four tiers based on the level of risk they pose to health, safety, and fundamental rights.

The Four Risk Tiers

Unacceptable risk: AI practices that are outright prohibited. These include social scoring, real-time remote biometric identification in public spaces by law enforcement (with narrow exceptions), manipulation that exploits vulnerable groups, and emotion recognition in workplaces and schools. These prohibitions have applied since 2 February 2025.

High risk: AI systems used in sensitive domains, including credit scoring, recruitment, medical devices, critical infrastructure, and law enforcement. These carry the heaviest compliance obligations: risk management systems, technical documentation, human oversight, data governance, accuracy and robustness requirements, and registration in an EU-wide database. Obligations for the use cases listed in Annex III apply from 2 August 2026; those for AI embedded in regulated products such as medical devices follow on 2 August 2027.

Limited risk: Systems with specific transparency obligations. Under Article 50, chatbots must disclose that users are interacting with AI, emotion recognition systems must notify users, and deepfake content must carry machine-readable watermarks. These rules also take effect in August 2026.

Minimal risk: The vast majority of AI systems (spam filters, inventory optimisers, recommendation engines) face no specific obligations beyond voluntary codes of conduct.
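For teams that want to encode the four tiers in an internal tool, a first-pass triage might look like the sketch below. The keyword sets are hypothetical and simply reuse the examples from this section; they are not a legal test, and a real classification has to follow the Act's own definitions and annexes.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical lookup sets built from the examples in the text above.
PROHIBITED_USES = {"social scoring", "workplace emotion recognition"}
HIGH_RISK_USES = {"credit scoring", "recruitment", "medical device",
                  "critical infrastructure", "law enforcement"}
TRANSPARENCY_USES = {"chatbot", "deepfake generation"}


def triage(use_case: str) -> RiskTier:
    """Check the strictest tier first and fall through to minimal risk."""
    normalised = use_case.strip().lower()
    if normalised in PROHIBITED_USES:
        return RiskTier.UNACCEPTABLE
    if normalised in HIGH_RISK_USES:
        return RiskTier.HIGH
    if normalised in TRANSPARENCY_USES:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


for use_case in ("Credit scoring", "chatbot", "spam filter"):
    print(use_case, "->", triage(use_case).value)
```

Checking the strictest tier first means a system that matches more than one list is always assigned the more demanding category.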

What the AI Act Means for Financial Services

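Financial services is one of the sectors most directly affected by the EU AI Act. Creditworthiness assessment and credit scoring of natural persons, along with risk assessment and pricing for life and health insurance, are explicitly listed as high-risk use cases under Annex III of the Act. Systems used to detect financial fraud are expressly carved out of the credit-scoring entry, and AML risk profiling is not listed by name, so both need a documented case-by-case assessment rather than an assumption either way. For banks, insurers, and fintechs operating in Europe, this means models that determine individuals' access to credit or insurance will need to meet the Act's full compliance requirements.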

The European Banking Authority published its analysis of the Act's implications for banking in November 2025. The EBA found that while the AI Act's requirements complement existing obligations under the Capital Requirements Directive (CRD), the Capital Requirements Regulation (CRR), PSD2, and DORA, there are significant ambiguities. The most pressing: what exactly constitutes an "AI system" under the Act, and when does a bank cross the line from deployer to provider?

The Provider vs Deployer Distinction

This distinction matters enormously in financial services. A provider is the entity that develops or places an AI system on the market. A deployer is the entity that uses it. Providers bear the heaviest obligations: conformity assessments, technical documentation, post-market monitoring.

But as the Harvard Data Science Review has detailed, banks rarely use AI models off the shelf. They customise, retrain, and fine-tune vendor models on proprietary data. At what point does a deployer become a provider? The answer determines which compliance obligations apply, and getting it wrong could mean either over-investing in unnecessary processes or facing regulatory action for under-compliance.

For organisations already navigating model risk management frameworks under Basel and EBA guidelines, the AI Act adds another layer. But it is not entirely new ground. As we explored in our post on why explainable AI matters, the demand for transparency and auditability in financial models has deep regulatory roots. The AI Act formalises and extends those expectations to all AI systems, not just those under traditional banking supervision.

The Obligation You May Already Be Breaking: Article 4 and AI Literacy

While most attention has focused on high-risk system requirements, one provision has already taken effect and applies to nearly every organisation in Europe. Article 4 of the EU AI Act requires that providers and deployers of AI systems "take measures to ensure, to their best extent, a sufficient level of AI literacy of their staff and other persons dealing with the operation and use of AI systems on their behalf."

This obligation has been legally binding since 2 February 2025. It makes no distinction by sector, company size, or type of AI used. If your organisation uses AI systems of any kind, you are expected to demonstrate that relevant staff have been trained. National enforcement begins in August 2026, with penalties reaching up to 15 million euros.

What counts as "sufficient" AI literacy? The Act says it must reflect each person's "technical knowledge, experience, education and training and the context the AI systems are to be used in." In practice, this means role-specific training: a compliance officer reviewing credit model outputs needs different training than a marketing analyst using an AI-powered customer segmentation tool.

For organisations that have not yet started, the time to act is now. Document your training programmes, keep attendance records, and produce compliance reports. The burden of proof lies with the organisation, not the regulator.
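As an illustration of what such record-keeping could look like, the sketch below tracks role-specific training and lists staff with no matching record. The field names, roles, and course identifiers are all hypothetical; the Act does not prescribe a format.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class TrainingRecord:
    staff_id: str
    course: str
    completed_on: date


def untrained_staff(staff_roles: dict, required_course: dict, records: list) -> list:
    """Return IDs of staff whose role requires a course they have no record for."""
    completed = {(r.staff_id, r.course) for r in records}
    missing = []
    for staff_id, role in staff_roles.items():
        course = required_course.get(role)
        if course is not None and (staff_id, course) not in completed:
            missing.append(staff_id)
    return sorted(missing)


# Hypothetical example data.
staff_roles = {"s1": "compliance officer", "s2": "marketing analyst"}
required_course = {
    "compliance officer": "credit-model-oversight",
    "marketing analyst": "ai-tools-basics",
}
records = [TrainingRecord("s1", "credit-model-oversight", date(2026, 3, 1))]

print(untrained_staff(staff_roles, required_course, records))  # ['s2']
```

Keeping the completion date on each record gives you the attendance evidence described above, and the gap list doubles as input for a compliance report.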

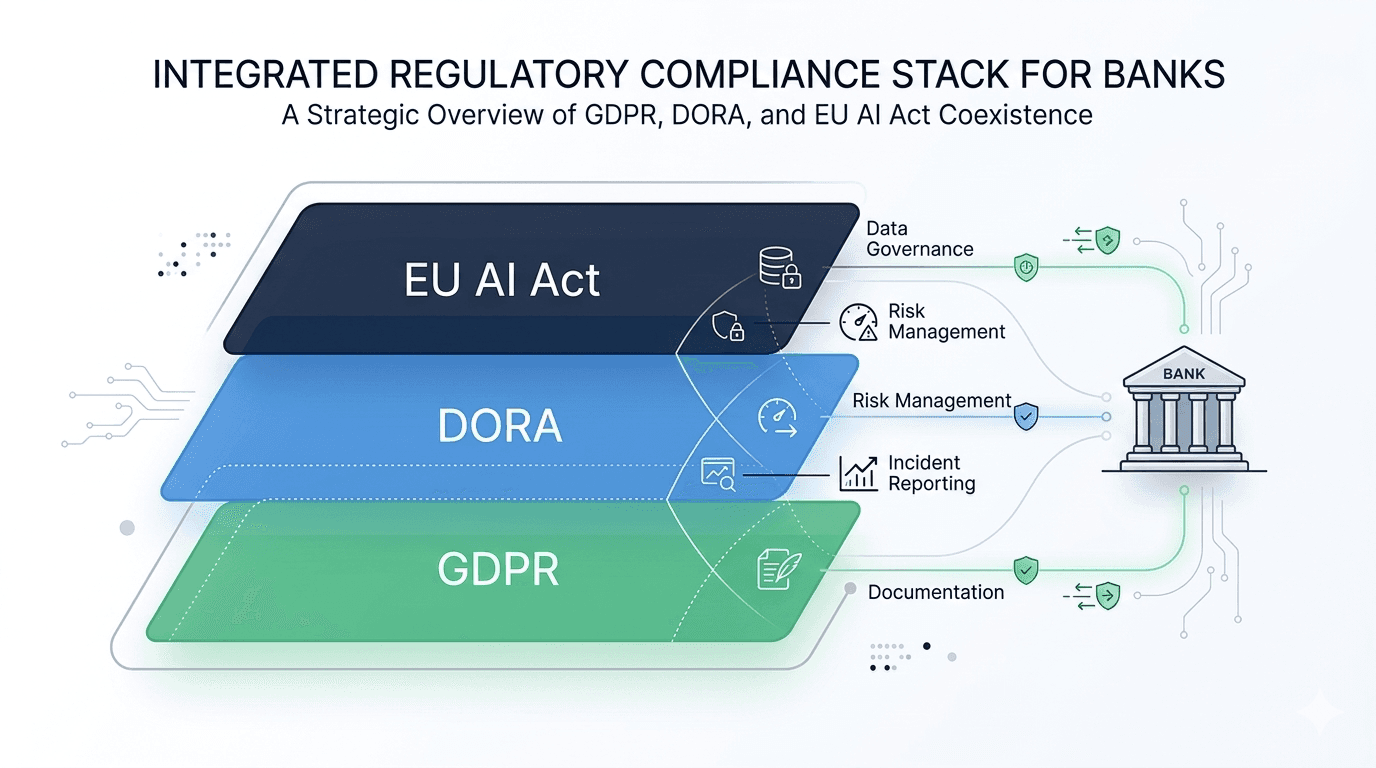

The Regulatory Stack: How the AI Act Interacts with GDPR and DORA

For financial institutions, the EU AI Act does not exist in isolation. It sits alongside the General Data Protection Regulation (GDPR) and the Digital Operational Resilience Act (DORA), creating what we call the "regulatory stack" for AI in European financial services.

These three regulations approach AI from different angles but converge on several key requirements:

· Risk management: all three demand formal frameworks for identifying, assessing, and mitigating risk. The AI Act focuses on fundamental rights and safety; DORA on operational and ICT resilience; GDPR on personal data protection.

· Data governance: the AI Act requires high-quality training datasets to minimise discriminatory outcomes. GDPR requires personal data to be accurate and kept up to date. DORA mandates data integrity for operational resilience.

· Incident reporting: all three require monitoring, logging, and notification when serious issues occur. The specifics differ (AI malfunctions, personal data breaches, ICT incidents), but the underlying infrastructure overlaps.

· Transparency and documentation: the AI Act demands technical documentation and audit trails. GDPR requires records of processing activities. DORA requires detailed ICT risk documentation.

The European Commission recognised this overlap in its November 2025 Digital Omnibus proposal, which aims to simplify reporting across these frameworks by introducing a single incident reporting point and aligning breach notification thresholds. But until those simplifications take full effect, financial institutions must navigate all three regimes simultaneously.

The practical implication? If your organisation has already invested in GDPR compliance and DORA resilience frameworks, you are not starting from zero. Many of the controls, governance structures, and documentation practices required by the AI Act align with what you already have. The key is to map the overlaps, identify the gaps, and extend your existing frameworks rather than building a separate AI Act compliance programme from scratch. Understanding how data flows through modern organisations is a necessary foundation for that mapping exercise.
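The mapping exercise can start as something very simple. The sketch below, built on the four themes listed earlier, records which regimes your existing controls already cover for each theme and reports what is missing. The `covered` data is a hypothetical example of an organisation with mature GDPR and DORA programmes, not a statement about any real firm.

```python
THEMES = ("risk management", "data governance",
          "incident reporting", "documentation")
REGIMES = ("AI Act", "GDPR", "DORA")


def find_gaps(covered: dict) -> dict:
    """Map each theme to the regimes that existing controls do not yet cover."""
    gaps = {}
    for theme in THEMES:
        missing = [r for r in REGIMES if r not in covered.get(theme, set())]
        if missing:
            gaps[theme] = missing
    return gaps


# Hypothetical starting position: GDPR and DORA in place, AI Act partly done.
covered = {
    "risk management": {"GDPR", "DORA"},
    "data governance": {"GDPR", "DORA"},
    "incident reporting": {"GDPR", "DORA"},
    "documentation": {"GDPR", "DORA", "AI Act"},
}

for theme, missing in find_gaps(covered).items():
    print(f"{theme}: extend existing controls to cover {', '.join(missing)}")
```

The output is a list of extensions to existing frameworks, which is the framing suggested above, rather than a separate programme built from nothing.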

The Innovation Question: Burden or Opportunity?

The EU AI Act has not been without criticism. Over thirty founders and venture investors signed an open letter arguing the Act risks creating a regulatory environment that will "undermine innovation, discourage investment, and ultimately leave Europe behind." The Regulatory Review published an analysis in March 2026 highlighting the paradoxes: a regulation intended to promote trustworthy AI may simultaneously slow the development of AI in the region that wrote the rules.

These concerns are legitimate. Compliance costs are real, especially for SMEs. Unfinished technical standards create uncertainty. The provider vs deployer distinction is not always clear. And European AI startups are competing for capital against US and Asian rivals operating in less regulated markets.

But the picture is more nuanced than the "regulation kills innovation" narrative suggests. The AI Act includes provisions for regulatory sandboxes, allowing companies to test AI systems under regulatory supervision before full compliance is required. It offers reduced obligations for SMEs and startups in certain contexts. And it creates something that may prove more valuable than deregulation: market trust.

In financial services, trust is the foundation of everything. As we argued in the NeuroNomixer manifesto, the gap between AI capability and AI governance is one of the defining challenges of this decade. Organisations that can demonstrate compliance with the EU AI Act, that can show regulators, customers, and partners that their AI systems are transparent, auditable, and fair, will have a competitive advantage. The Act does not just constrain; it creates a framework for building AI that people can actually trust.

What Businesses Should Do Now

With four months until full enforcement, organisations should be taking concrete steps. Here is a practical starting point, informed by the Orrick compliance framework and adapted for the financial services context:

1. Inventory your AI systems. You cannot classify what you have not mapped. Over half of organisations still lack a systematic inventory of their AI systems. Start here.

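2. Classify by risk tier. For each AI system, determine whether it falls under the Act's prohibited, high-risk, limited-risk, or minimal-risk categories. In financial services, any system that assesses creditworthiness or otherwise determines individuals' eligibility for credit or insurance is likely high-risk. Fraud detection systems are expressly excluded from the credit-scoring entry in Annex III, but the reasoning behind that classification should still be documented.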

3. Determine your role. Are you a provider (developing or placing the system on the market) or a deployer (using it)? If you customise vendor models, you may be both.

4. Assess compliance gaps. Compare your existing risk management, documentation, and governance frameworks against the Act's requirements. If you are already compliant with GDPR and DORA, you likely have significant overlap.

5. Address AI literacy. If you have not started training staff on AI literacy under Article 4, begin immediately. This obligation is already in force.

6. Engage legal and compliance early. The AI Act is complex, and the interaction with GDPR, DORA, and sector-specific regulation (CRD, MiFID, Solvency II) requires expert guidance.
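The first four steps lend themselves to a simple register. The sketch below is one hypothetical way to structure an inventory entry and list the steps still open for it; the field names and example systems are assumptions for illustration, not a prescribed format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class InventoryEntry:
    name: str                        # step 1: the system is in the inventory
    risk_tier: Optional[str] = None  # step 2: set once classified
    role: Optional[str] = None       # step 3: "provider", "deployer", or "both"
    gaps_assessed: bool = False      # step 4: set once the gap analysis is done


def open_steps(entry: InventoryEntry) -> list:
    """List the checklist steps that are still outstanding for one system."""
    steps = []
    if entry.risk_tier is None:
        steps.append("classify by risk tier")
    if entry.role is None:
        steps.append("determine role")
    if not entry.gaps_assessed:
        steps.append("assess compliance gaps")
    return steps


# Hypothetical inventory.
inventory = [
    InventoryEntry("credit-scoring-model", risk_tier="high", role="both"),
    InventoryEntry("support-chatbot"),
]

for entry in inventory:
    print(entry.name, "->", open_steps(entry))
```

Steps 5 and 6 apply to the organisation as a whole rather than to individual systems, so they are deliberately left out of the per-system record.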

Conclusion: Regulation as Foundation

The EU AI Act is not a distant policy experiment. It is a live regulatory framework with binding deadlines, significant penalties, and real implications for how organisations build and deploy AI. For financial services, where AI already drives decisions about credit, risk, and customer access, the stakes are especially high.

But the Act is also an opportunity. Organisations that embrace explainability, transparency, and robust governance will be better positioned, not just for compliance, but for the trust-based economy that is emerging around AI. Understanding the foundations of data, why explainable AI matters, and how the regulatory landscape is evolving are not separate concerns. They are facets of the same challenge: building AI systems that work for people, institutions, and society.

In upcoming posts, we will go deeper into how AI governance is reshaping tech in Europe, and how privacy regulation under GDPR shapes the way we build models. The regulatory journey is just beginning.

Continue Reading

· Why Explainable AI Matters More Than You Think

· Exploring How Machine Learning and Analytics Shape the Future

· What NeuroNomixer Stands For: AI, Data, and the Future of Systems