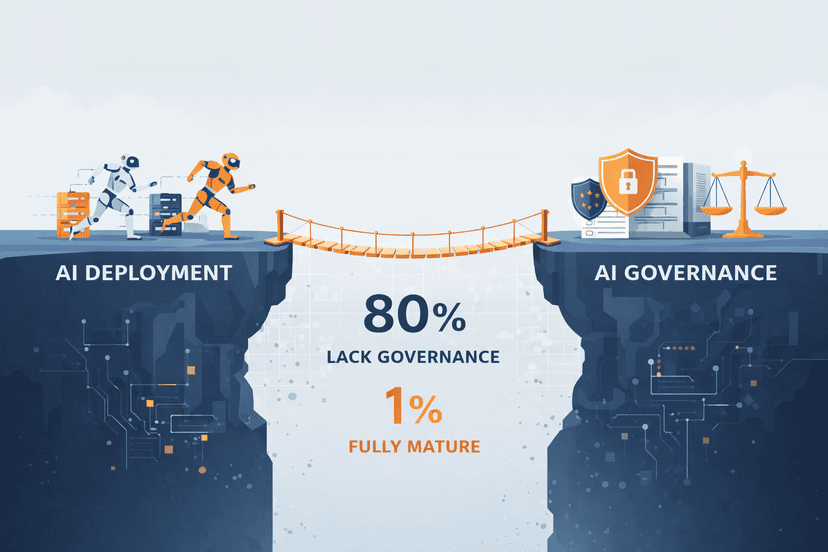

In 2026, artificial intelligence is everywhere, and almost nowhere is it understood well enough. Enterprises are deploying AI agents at record speed: Gartner predicts that 40% of enterprise applications will embed task-specific AI agents by the end of this year, up from less than 5% just twelve months ago. Yet according to McKinsey's State of AI Trust survey, only 1% of organisations consider themselves mature in AI governance. The gap between what we are building and what we understand about what we are building has never been wider.

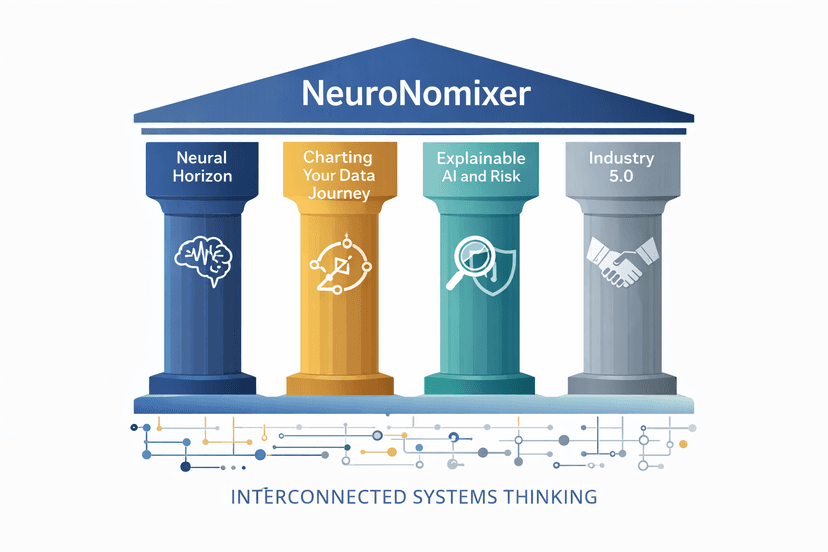

This is the space NeuroNomixer was created to occupy. Not another AI hype channel, and not a dry academic journal, but a platform that treats AI, data, finance, regulation, and education as parts of the same interconnected system. Because they are.

What You'll Learn in This Post

· Why the current AI landscape demands a platform that thinks across boundaries

· The four content pillars that define NeuroNomixer's editorial mission

· How the governance gap in AI agent deployment shapes our perspective

· Where NeuroNomixer fits in the broader ecosystem of AI and data publications

· What to expect from our 30-post content roadmap, and why it matters for your learning

The Interconnection Thesis: Why Silos Fail

Most AI content today exists in silos. Technical blogs explain model architectures. Regulatory publications discuss compliance frameworks. Career sites list tools to learn. Finance publications cover fintech trends. These are all useful, but they are incomplete on their own.

The reality is that these domains do not operate in isolation. When a bank deploys a machine learning model for credit scoring, it sits at the intersection of data engineering, statistical modelling, regulatory compliance (Basel III, GDPR, EBA guidelines), explainability requirements, ethical considerations, and workforce capability. If you understand only one of these dimensions, you are missing most of the picture.

NeuroNomixer's founding thesis is simple: the systems shaping our world are interconnected, and they must be understood together. This is not an academic observation; it is a practical necessity. Gartner expects over 40% of agentic AI projects to be cancelled by the end of 2027 due to escalating costs and unclear business value. In our view, organisations that treat AI strategy, data governance, and regulatory compliance as separate workstreams are the most exposed to that outcome.

If you have read our earlier exploration of how machine learning and analytics are shaping the future of finance, you will recognise this interconnected lens. That post examined how regulation, explainability, and statistical heritage converge in banking. This manifesto explains why that perspective defines everything we publish.

The Governance Gap: Why This Matters Now

Let us put a number on the problem. Analysis of Gartner and IDC data reveals that four out of five enterprises deploying AI agents lack mature governance infrastructure. That is not a technical oversight; it is a structural failure. Organisations are racing to deploy autonomous systems without the frameworks to ensure those systems are transparent, accountable, and aligned with regulatory expectations.

This governance gap is particularly acute in regulated industries. The EU AI Act, with prohibited practices already in force since February 2025 and high-risk system requirements rolling out in August 2026, creates binding obligations that many organisations are not yet equipped to meet. In financial services, DORA (the Digital Operational Resilience Act) has been fully applicable since January 2025, requiring ICT risk management, incident reporting, resilience testing, and third-party oversight across more than twenty categories of financial entities.

At the same time, the CFA Institute published landmark guidance on explainable AI in finance, showing how methods like SHAP can bridge the gap between high-performance ensemble models and the regulatory demand for interpretability under Basel III. The technical solutions exist, but the bridge between technical capability and governance readiness is still being built.
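To make the idea behind SHAP concrete, here is a minimal sketch of the Shapley-value attribution that SHAP builds on. It is a brute-force illustration, not the `shap` library's API and not the CFA Institute's method: the toy `score` function, the feature names, the weights, and the all-zero baseline are all hypothetical, chosen only so the arithmetic is easy to follow.

```python
from itertools import combinations
from math import factorial

# Hypothetical toy credit-risk score; feature names and weights are illustrative only.
def score(applicant):
    """Toy risk score with one interaction term so attributions are non-trivial."""
    s = 0.5 * applicant["debt_ratio"] + 0.3 * applicant["late_payments"]
    s += 0.2 * applicant["debt_ratio"] * applicant["late_payments"]
    s -= 0.1 * applicant["years_employed"]
    return s

def shapley_values(model, instance, baseline):
    """Exact Shapley values by enumerating every coalition of features.

    Features outside a coalition are set to their baseline value.
    Cost grows exponentially with the number of features, so this
    only works for very small models.
    """
    features = list(instance)
    n = len(features)

    def value(coalition):
        mixed = {f: (instance[f] if f in coalition else baseline[f]) for f in features}
        return model(mixed)

    phi = {}
    for f in features:
        others = [g for g in features if g != f]
        total = 0.0
        for k in range(n):  # coalition sizes 0 .. n-1
            for subset in combinations(others, k):
                weight = factorial(k) * factorial(n - k - 1) / factorial(n)
                with_f = value(set(subset) | {f})
                without_f = value(set(subset))
                total += weight * (with_f - without_f)
        phi[f] = total
    return phi

# Hypothetical applicant and an all-zero reference point.
instance = {"debt_ratio": 1.0, "late_payments": 2.0, "years_employed": 3.0}
baseline = {"debt_ratio": 0.0, "late_payments": 0.0, "years_employed": 0.0}

phi = shapley_values(score, instance, baseline)
for name, contribution in phi.items():
    print(f"{name}: {contribution:+.3f}")
```

The attributions always sum to the difference between the applicant's score and the baseline score, which is the property that makes this style of explanation auditable: every point of the score is assigned to a named input. Practical SHAP implementations rely on approximations or model-specific algorithms, because enumerating all coalitions does not scale beyond a handful of features.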

NeuroNomixer exists to help build that bridge. We believe that governance is not a constraint on innovation; it is a precondition for trust. And in regulated industries, trust is the only currency that matters.

NeuroNomixer's Four Pillars

Everything we publish falls within four interconnected categories. Together, they form a coherent framework for understanding the forces reshaping the digital and financial landscape.

1. The Neural Horizon: AI and Technology Analysis

This is where we cover the big picture: major AI developments, the companies and policies shaping the industry, and the trends that will define the next phase of digital transformation. We cut through the noise to identify the signal: the developments that will actually change how we work, build, and regulate technology. From the EU AI Act to open banking, from agentic AI adoption to the Nordic innovation ecosystem, The Neural Horizon is our lens on what is happening and what it means.

2. Charting Your Data Journey: Education and Career Paths

Data literacy is no longer optional. Recent surveys show that 88% of enterprise leaders consider basic data literacy essential, with 72% holding the same standard for AI literacy. Our educational content does not just list tools to learn; it maps skills to roles, connects technical competence to industry context, and helps readers build a career with intention. If you have explored our modern data career map or our guides to learning SQL and Excel, you know we take the practical side seriously.

3. Explainable AI, Risk and Responsible Systems

This is perhaps our most distinctive pillar. While other publications celebrate AI capabilities, we ask: Can you explain it? Can you audit it? Can you trust it? In credit risk, healthcare, and public-sector decision-making, the answers to these questions are not optional; they are legal requirements. We cover explainability methods, model risk management, bias and fairness, and the regulatory landscape that governs high-stakes AI. This is where our finance and risk analytics background gives NeuroNomixer a voice that most AI publications simply do not have.

4. Industry 5.0 and Future Systems

The European Commission defines Industry 5.0 through three pillars: human-centricity, sustainability, and resilience. It represents a shift from pure automation to systems that serve people and societies. This is almost entirely absent from the mainstream AI conversation, and that is exactly why we cover it. At NeuroNomixer, we believe the future of technology is not just about what machines can do, but about what kind of world they help us build.

What Makes This Platform Different

The AI and data content ecosystem is crowded. So why does NeuroNomixer need to exist?

We examined the most prominent publications in this space, from large platforms like Towards Data Science and Analytics Vidhya to specialised outlets covering fintech, regulation, and data careers. The pattern is consistent: almost no publication combines AI analysis, regulated industry depth, educational pathways, and governance under a single editorial framework.

Most manifesto or "about" pages on competitor sites are short (300 to 800 words), lack specific citations, reference no current data or regulation, and do not provide a concrete content roadmap. They promise to "cut through the noise" without explaining how.

NeuroNomixer is different because:

· Grounded in data: Every claim is evidence-backed. We cite specific data, name specific regulations, and link to primary sources.

· Finance and risk perspective: We write from inside the regulated world (credit risk, banking compliance, financial modelling), not from outside looking in.

· Interconnected thinking: Our content explicitly connects skills to roles, tools to industries, and regulations to real business decisions.

The Road Ahead: Our 30-Post Content Plan

NeuroNomixer already has eight published articles covering data-driven decision-making, the data ecosystem, foundational tools for data careers, and more. This is not a launch; it is a continuation.

Over the coming months, we will publish thirty new posts spanning all four pillars. Here is what is ahead:

· Regulation and governance: Deep dives into the EU AI Act, GDPR, and how regulation is reshaping AI in Europe

· Explainability and risk: Explainable AI in credit risk, model risk management, and the case for simplicity in high-stakes systems

· Education and careers: From SQL and Python roadmaps to data governance and what employers actually expect from entry-level data talent

· Future systems: Industry 5.0, resilient systems, and the Nordic AI ecosystem

· Signal over noise: Regular frontier reports tracking the AI developments that actually matter, not the ones that generate the most headlines

Each post will be research-backed and written to serve readers who want substance over spectacle. Whether you are a data analyst building your first dashboard, a risk professional navigating new regulations, a data scientist building retrieval-augmented generation (RAG) pipelines, or a curious mind trying to understand where AI is actually heading, there will be something here for you.

Why This Matters, and What Comes Next

The world does not need another blog that explains what a neural network is. It needs a platform that asks: Who audits the neural network? What happens when it is wrong? Who benefits, and who bears the risk?

That is what NeuroNomixer is for. We exist at the intersection of technology and accountability, education and industry, innovation and regulation. We believe the future belongs to people who can think across these boundaries, and we are here to help you become one of them.

If this resonates with you, start exploring. Our next post dives into why explainable AI matters more than you think: the regulatory, ethical, and technical case for building AI systems that can explain themselves. It is one of the most important conversations in technology today, and it is at the heart of everything we do here.