Every quarter, the AI industry generates more announcements than most people can process: new models, record funding rounds, regulatory deadlines, and bold claims about what comes next. Most of it is noise. The challenge, particularly for anyone building, regulating, or investing in systems that handle real money and real risk, is knowing which signals will still matter in six months. This edition of Signal from the Frontier filters the Q2 2026 landscape through that lens: what is genuinely shifting the ground beneath AI in finance, governance, and data infrastructure, and what you can safely ignore.

What You Will Learn

• Why agentic AI has moved from prototype to production in banking, and the governance gap that comes with it

• How the EU AI Act's August 2026 enforcement deadline is reshaping compliance strategy across financial services

• What $300 billion in Q1 2026 venture funding reveals about concentration risk in the AI supply chain

• Where explainability is becoming a competitive advantage, not just a regulatory obligation

• How open banking deadlines and AI agent autonomy are creating a new kind of systemic risk

Agentic AI in Finance: From Demos to Deployment

If 2025 was the year everyone talked about AI agents, 2026 is the year they arrived in production. According to Gartner's latest projections, 40 percent of enterprise applications will incorporate task-specific AI agents by the end of this year, up from less than five percent in 2025. In financial services, the shift is already visible. Goldman Sachs is developing autonomous agents for trade accounting and client onboarding. Lloyds Banking Group expects agentic AI to add over 100 million pounds in value during 2026. A Singaporean institution combined NLP with anomaly detection to achieve a 40 percent reduction in transaction-monitoring false positives.

These are not chatbot upgrades. Agentic systems make decisions, execute workflows, and coordinate with other agents autonomously. The operational benefits are clear: banks using AI-driven fraud detection report preventing more than five million dollars in attempted fraud within the past two years. But the governance implications are just as significant. A McKinsey report on AI trust found that 80 percent of organisations have already encountered risky agent behaviours, including improper data exposure and unauthorised access, yet only 20 percent have robust security measures in place.

The risk is not theoretical. Simulation studies show that a single compromised agent can poison 87 percent of downstream decisions within four hours. For institutions subject to SR 11-7 model risk guidance or the EU AI Act's high-risk requirements, this creates an urgent need for agent-level oversight frameworks that most teams have not yet built. If you want to understand the regulatory scaffolding taking shape around these systems, our deep dive into the EU AI Act's practical impact on business covers the compliance timeline and penalty structure in detail.

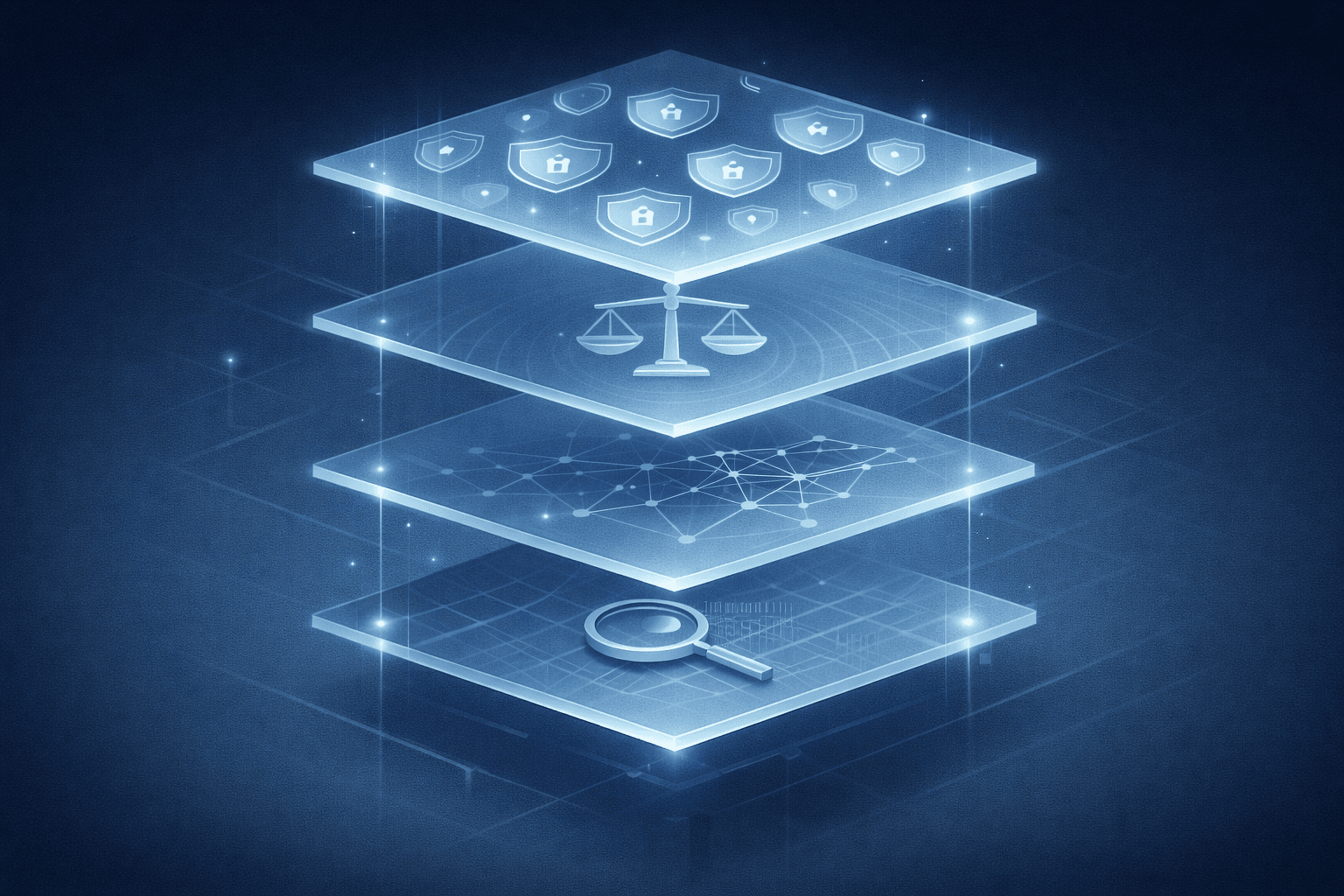

The Regulatory Convergence: EU AI Act, DORA, and the Compliance Stack

August 2, 2026, is a date every AI team in financial services should have circled. That is when the EU AI Act's core provisions become fully enforceable for high-risk AI systems, including those used in credit scoring, fraud detection, and insurance underwriting. The requirements are substantial: mandatory risk management systems, data governance protocols, technical documentation, transparency obligations under Article 50, and continuous human oversight. Penalties under the Act reach up to 35 million euros or seven percent of global annual turnover for the most serious violations, with lower tiers for other breaches.

But the AI Act does not operate in isolation. Financial institutions must now navigate what practitioners are calling the "regulatory stack": the EU AI Act layered on top of GDPR, the Digital Operational Resilience Act (DORA), and sector-specific supervision from bodies like the ECB and EBA. In the US, the picture is no simpler: SR 11-7, GLBA, PCI DSS, NYDFS Part 500, and new SEC and FINRA 2026 oversight priorities all apply simultaneously, each with its own evidentiary standards.

The practical challenge is not understanding any single regulation; it is managing their overlap. A credit-scoring model might need to satisfy GDPR's right to explanation, the AI Act's transparency requirements, DORA's operational resilience standards, and SR 11-7's model validation expectations, all at once. Organisations that treat these as separate compliance projects will burn resources. Those that build integrated governance frameworks will turn compliance into a competitive advantage.
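One way to picture an integrated framework is a single control registry in which each piece of evidence is tagged with every regime it supports. The sketch below is illustrative only: the control names and the control-to-regime mapping are hypothetical assumptions, and only the regime labels come from the discussion above.

```python
from collections import defaultdict

# Hypothetical controls for one credit-scoring model. The mapping of
# controls to regimes is an illustrative assumption, not a legal reading.
CONTROLS = {
    "decision_explanation_log": {"GDPR", "EU AI Act"},
    "model_validation_report": {"SR 11-7", "EU AI Act"},
    "incident_recovery_test": {"DORA"},
    "training_data_lineage": {"GDPR", "EU AI Act", "SR 11-7"},
}

# Regimes named in the text as applying to the same model at once.
REQUIRED_REGIMES = {"GDPR", "EU AI Act", "DORA", "SR 11-7"}


def coverage_by_regime(controls):
    """Invert control -> regimes into regime -> sorted list of controls."""
    index = defaultdict(list)
    for control, regimes in controls.items():
        for regime in regimes:
            index[regime].append(control)
    return {regime: sorted(names) for regime, names in index.items()}


def uncovered_regimes(controls, required):
    """Return the regimes in `required` that no control provides evidence for."""
    covered = set().union(*controls.values()) if controls else set()
    return required - covered


def shared_controls(controls, minimum=2):
    """Controls whose evidence is reused by at least `minimum` regimes."""
    return sorted(name for name, regimes in controls.items() if len(regimes) >= minimum)


print("Gaps:", uncovered_regimes(CONTROLS, REQUIRED_REGIMES))
print("Reused evidence:", shared_controls(CONTROLS))
```

The design point is the inversion: when evidence is indexed by regime rather than by project, gaps and reuse become visible in one place instead of being discovered separately by each compliance team.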

Meanwhile, a Code of Practice on AI-generated content labelling is expected by June 2026, adding another layer for any institution using generative AI in customer communications. For a broader look at why explainability sits at the heart of these requirements, see our analysis of what regulators actually demand and why black-box models are becoming indefensible.

The $300 Billion Quarter: What AI Funding Reveals About Concentration Risk

Q1 2026 shattered every venture capital record. According to Crunchbase, $300 billion was invested across 6,000 startups globally, with $242 billion (roughly 80 percent of the total) going to AI companies. Four of the five largest private funding rounds in history occurred in a single quarter: OpenAI at $110 billion, Anthropic at $30 billion, xAI at $20 billion, and Waymo at $16 billion.

The headline narrative is about growth, but the structural story is about concentration. The frontier AI market is consolidating around a small number of providers. For financial institutions, this creates a dependency risk that most technology assessments do not capture. When three or four labs control the most capable models, every bank and insurer building on those models inherits a single point of failure. A service disruption, a licensing change, or a regulatory action against one provider could cascade across the sector.

The M&A landscape reinforces this trend. Deloitte's analysis of AI acquisition patterns shows companies deployed $157 billion across more than 33 acquisitions in data, cloud, and AI infrastructure in 2025. OpenAI alone struck a reported $3 billion deal for Windsurf, which ultimately did not close, and acquired Jony Ive's io Products for $6.5 billion. The competitive dynamics are shifting from "who has the best model" to "who controls the infrastructure."

For risk professionals, this concentration should be treated with the same seriousness as any vendor concentration assessment. The Stanford research on AI and systemic financial risk identifies five categories of systemic risk that AI could amplify: liquidity mismatches, common exposures, interconnectedness, lack of substitutability, and leverage. Model provider concentration maps directly onto the "common exposures" and "lack of substitutability" categories. If your institution depends on a single frontier lab, this is a risk that belongs on the board's agenda.
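A vendor concentration assessment can start with something as simple as a Herfindahl-Hirschman Index (HHI) over the share of AI workloads that sit with each model provider. HHI is a standard concentration measure borrowed here for illustration; it is not drawn from the Stanford research cited above. The provider names and workload counts are hypothetical.

```python
def herfindahl_index(shares):
    """Herfindahl-Hirschman Index on fractional shares: sum of squared shares.

    Ranges from 1/n (spread evenly over n providers) to 1.0 (one provider).
    """
    total = sum(shares.values())
    if total <= 0:
        raise ValueError("shares must sum to a positive number")
    return sum((value / total) ** 2 for value in shares.values())


def largest_dependency(shares):
    """Return (provider, fraction of total) for the most heavily used provider."""
    total = sum(shares.values())
    provider = max(shares, key=shares.get)
    return provider, shares[provider] / total


# Hypothetical count of production AI workloads per model provider.
workloads = {"provider_a": 14, "provider_b": 4, "provider_c": 2}

hhi = herfindahl_index(workloads)
top_provider, top_share = largest_dependency(workloads)
print(f"HHI: {hhi:.3f}, largest dependency: {top_provider} ({top_share:.0%})")
```

A value close to 1.0 signals the "lack of substitutability" problem described above. Any threshold for escalation would need to be set by the institution's own risk appetite.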

Explainability as Strategic Advantage, Not Just Compliance Cost

The AI model risk management market reached $7.17 billion in 2025 and is projected to grow to $8.33 billion in 2026, a 16 percent year-on-year increase. That growth reflects a fundamental shift: explainability is no longer a box-ticking exercise. It is becoming the foundation of regulatory trust, customer confidence, and operational resilience.

The regulatory drivers are converging from multiple directions. The EU AI Act requires transparency for all high-risk systems. US regulators are sharpening their focus: the SEC's 2026 examination priorities now explicitly address AI risk, and FINRA's oversight report elevates generative AI and cyber-enabled fraud as priority concerns. In practice, this means every credit decision, every fraud flag, and every AML alert generated by an AI system may need to be explained to regulators, auditors, and customers.

The institutions that are ahead of this curve are not treating explainability as a constraint. They are using it to build what might be called a compliance moat: the ability to deploy AI confidently because their governance, documentation, and interpretability standards exceed regulatory minimums. This advantage compounds over time. A firm that can demonstrate model transparency to regulators earns faster approvals, fewer enforcement actions, and greater freedom to innovate. We explored the practical techniques behind this approach, from SHAP and LIME to explainable boosting machines, in our guide to interpretable versus black-box models and the trade-offs that define modern AI.
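To make "explaining every credit decision" concrete, here is a minimal sketch of an inherently interpretable additive score that emits reason codes. It is not SHAP or LIME. It relies on the simpler fact that in a linear model each feature's contribution is its weight times its distance from a baseline. All weights, baseline values, and feature names are hypothetical.

```python
import math

# Hypothetical weights and portfolio-average baseline for a toy credit model.
WEIGHTS = {"debt_to_income": -3.0, "years_of_history": 0.2, "missed_payments": -0.8}
BASELINE = {"debt_to_income": 0.30, "years_of_history": 8.0, "missed_payments": 0.5}
INTERCEPT = 1.5  # log-odds of approval for an applicant exactly at the baseline


def contributions(applicant):
    """Per-feature shift in log-odds relative to the baseline applicant."""
    return {name: WEIGHTS[name] * (applicant[name] - BASELINE[name]) for name in WEIGHTS}


def approval_probability(applicant):
    """Logistic score: intercept plus the sum of all feature contributions."""
    log_odds = INTERCEPT + sum(contributions(applicant).values())
    return 1.0 / (1.0 + math.exp(-log_odds))


def reason_codes(applicant, top_n=2):
    """Features that pushed the score down the most, most negative first."""
    negative = [(name, value) for name, value in contributions(applicant).items() if value < 0]
    return [name for name, _ in sorted(negative, key=lambda item: item[1])[:top_n]]


applicant = {"debt_to_income": 0.55, "years_of_history": 3.0, "missed_payments": 2.0}
print(reason_codes(applicant), round(approval_probability(applicant), 3))
```

Because the contributions sum exactly to the score's movement away from the baseline, the same numbers can serve a regulator, an auditor, and a customer-facing adverse action notice.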

Open Banking Meets Agentic AI: The Emerging Systemic Risk Vector

In the US, the Personal Financial Data Rights Rule requires large banks to make consumer-authorised financial data (transaction histories, balances, account terms) available to third parties through standardised APIs by April 2026. The European open banking ecosystem, already more mature, continues to expand. These developments are valuable for consumers and competition. They also create a new attack surface.

Combine open banking APIs with agentic AI, and you get autonomous systems that can monitor accounts across multiple institutions, move funds between providers, negotiate loan terms, and execute financial decisions in real time. The promise is hyper-personalised financial management; the risk is a deeply interconnected financial graph where an agent error or a compromised API can propagate at machine speed. This is the intersection that almost no analyst coverage addresses: open banking standardisation amplifying the systemic interconnectedness that Stanford's research identifies as a key crisis vector.
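Mapping that interconnected graph is a tractable first step. The sketch below treats APIs and agents as nodes in a directed dependency graph and uses breadth-first search to list everything downstream of a single compromised node. The node names and edges are hypothetical, and reachability is only an upper bound on impact, not a model of how errors actually spread.

```python
from collections import deque

# Hypothetical dependency graph: an edge A -> B means B consumes A's output.
DEPENDENCIES = {
    "bank_a_api": ["aggregator_agent"],
    "bank_b_api": ["aggregator_agent"],
    "aggregator_agent": ["budgeting_agent", "loan_negotiation_agent"],
    "budgeting_agent": ["payments_agent"],
    "loan_negotiation_agent": [],
    "payments_agent": [],
    "statement_archive": [],
}


def blast_radius(graph, source):
    """Breadth-first search: every node reachable from `source`, with hop count."""
    hops = {}
    seen = {source}
    queue = deque([(source, 0)])
    while queue:
        node, depth = queue.popleft()
        for downstream in graph.get(node, []):
            if downstream not in seen:
                seen.add(downstream)
                hops[downstream] = depth + 1
                queue.append((downstream, depth + 1))
    return hops


affected = blast_radius(DEPENDENCIES, "bank_a_api")
share = len(affected) / (len(DEPENDENCIES) - 1)  # fraction of the other nodes reached
print(f"{len(affected)} downstream nodes affected ({share:.0%}):", affected)
```

Running the same search from each API and agent in turn gives a ranked list of the nodes whose failure would touch the most of the system, which is a natural starting point for stress-testing agent chains.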

For a broader view of how data flows through financial organisations and why governance must be embedded at every stage, our guide to the data lifecycle from collection to retirement provides a practical framework.

The Model Landscape: What Actually Changed

Beneath the funding headlines, the model landscape in Q2 2026 reflects a maturing market. GPT-5.4 introduced a one-million-token context window and the ability to execute multi-step workflows autonomously, pushing OpenAI's positioning from "chat tool" to "digital coworker." Anthropic released Claude Mythos. Google's Gemini 3.1 Pro leads in 13 of 16 public benchmarks. Meta's Llama 4 made open-source models genuinely competitive with proprietary alternatives. DeepSeek V4 is expected before the end of Q2.

The more interesting trend is not any single model release but the shift in how capability is being deployed. MIT Technology Review's analysis highlights that brute-force scaling is yielding diminishing returns. Innovation is moving to post-training techniques, model orchestration, and smaller, fine-tuned models designed for specific enterprise tasks. For financial institutions, this means the competitive question is no longer "which model is most powerful" but "which system, combining models, tools, and governance, delivers reliable outcomes in regulated environments."

This is directly relevant to the accuracy-versus-interpretability trade-off we have explored before. A frontier model with a trillion parameters may score highest on benchmarks, but a well-tuned, explainable system that satisfies regulatory requirements delivers more value in a credit-risk or compliance setting.

AI Safety and Governance: The Institutional Response

The International AI Safety Report 2026, led by Turing Award winner Yoshua Bengio and authored by over 100 experts from more than 30 countries, represents the largest global collaboration on AI safety to date. Its recommendations are shaping national frameworks across Europe, North America, and Asia.

At the corporate level, 12 frontier AI companies published or updated safety frameworks in 2025, establishing what amounts to an emerging industry standard for responsible development. The NIST AI Risk Management Framework and ISO 42001 are becoming the governance baseline for organisations that want to demonstrate responsible AI deployment. In February 2026, the US Treasury released a dedicated Financial Services AI Risk Management Framework, signalling that sector-specific governance is no longer optional.

The Allianz Risk Barometer 2026 underscores the broader mood: AI rose to the number two global business concern (32 percent), up from number ten in 2025, the largest single-year jump in the barometer's history. That shift reflects a maturation: organisations are moving past fascination with capability and starting to grapple with operational risk, cascading errors, and accountability gaps.

What This Means: Separating Signal from Noise

Not every AI headline in Q2 2026 carries equal weight. Here is how the developments stack up through the lens of finance, risk, and governance:

| Development | Signal Strength | Finance Impact | Action Required |
| --- | --- | --- | --- |
| Agentic AI in production | Very High | Direct operational and risk impact | Build agent oversight frameworks now |
| EU AI Act enforcement (Aug 2026) | Very High | Compliance, penalties, competitive positioning | Audit high-risk systems; start documentation |
| Model provider consolidation | High | Vendor dependency, systemic risk | Conduct vendor concentration risk assessment |
| Explainability market growth | High | Competitive moat opportunity | Invest in interpretable systems early |
| Open banking + agent convergence | Medium-High | New systemic risk vector | Map API dependencies; stress-test agent chains |
| New model releases (GPT-5.4, etc.) | Medium | Capability gains, diminishing marginal returns | Evaluate system-level value, not benchmarks |
| Record VC funding ($300B) | Medium | Market signal, not direct operational impact | Monitor for consolidation effects |
| International AI Safety Report | Medium | Long-term framework influence | Track for regulatory translation |

Conclusion: Governance Is the New Competitive Edge

The Q2 2026 AI landscape is defined by a paradox. The technology is more capable than ever: agents that execute autonomously, models that process a million tokens, systems that detect fraud patterns humans cannot see. But capability without governance is a liability. The institutions that will lead are not those deploying the most advanced models; they are those building the systems, frameworks, and cultures that make advanced deployment safe, explainable, and defensible.

Three priorities emerge for anyone working at the intersection of AI and finance. First, treat agentic AI governance as an operational necessity, not a compliance afterthought. The gap between deployment speed and oversight readiness is the single biggest risk in financial AI today. Second, build for the regulatory stack. The EU AI Act, GDPR, DORA, and sector-specific supervision are converging, and integrated governance frameworks will save far more than piecemeal compliance projects. Third, assess model provider concentration as a systemic risk. The frontier AI market's consolidation creates dependencies that deserve the same scrutiny as any critical vendor relationship.

At NeuroNomixer, we track these developments because they sit at the intersection of technology, finance, risk, and governance: the space where the most important decisions about our digital future are being made. If you are building in this space, we are writing for you.

Continue Reading

• The EU AI Act in 2026: What It Actually Means for Business and Innovation: a complete guide to the risk-based framework, enforcement timeline, and penalty structure.

• Why Explainable AI Matters More Than You Think: the case for transparency in high-stakes AI, from regulatory mandates to the accuracy-interpretability myth.

• Interpretable vs Black-Box Models: The Trade-Off That Defines Modern AI: a practical framework for choosing the right model transparency level for your use case.