On 22 April 2026, the Financial Conduct Authority announced eight firms selected for its second AI Live Testing cohort. The move represents a significant maturation: from exploratory deployments (Cohort 1) to production-grade systems running under supervised conditions. More importantly, the composition of the cohort reveals the FCA's confidence in which AI use cases are ready for permanent rules, and which remain frontier territory. This is not just news; it is a regulatory roadmap.

What You Will Learn

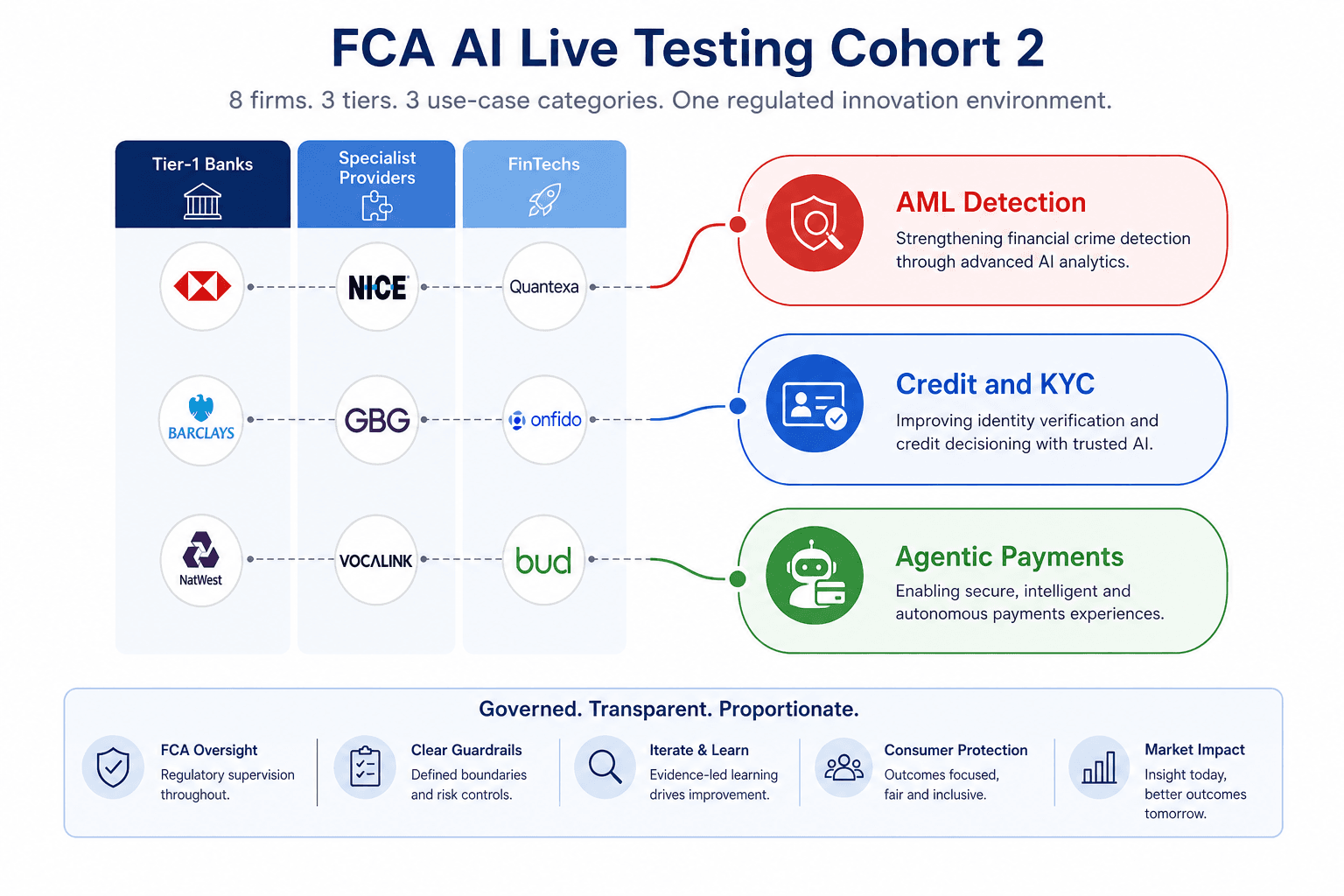

• Why the use-case concentration (4 AML firms, 4 credit/KYC firms, 2 agentic payment firms, with some firms testing more than one use case) signals the FCA's regulatory roadmap for 2026-2027

• Which AI applications the FCA now deems mature enough for autonomous deployment and which still require human oversight

• The three-tier firm structure (Tier-1 banks, specialist providers, FinTechs) and what their participation signals about governance confidence

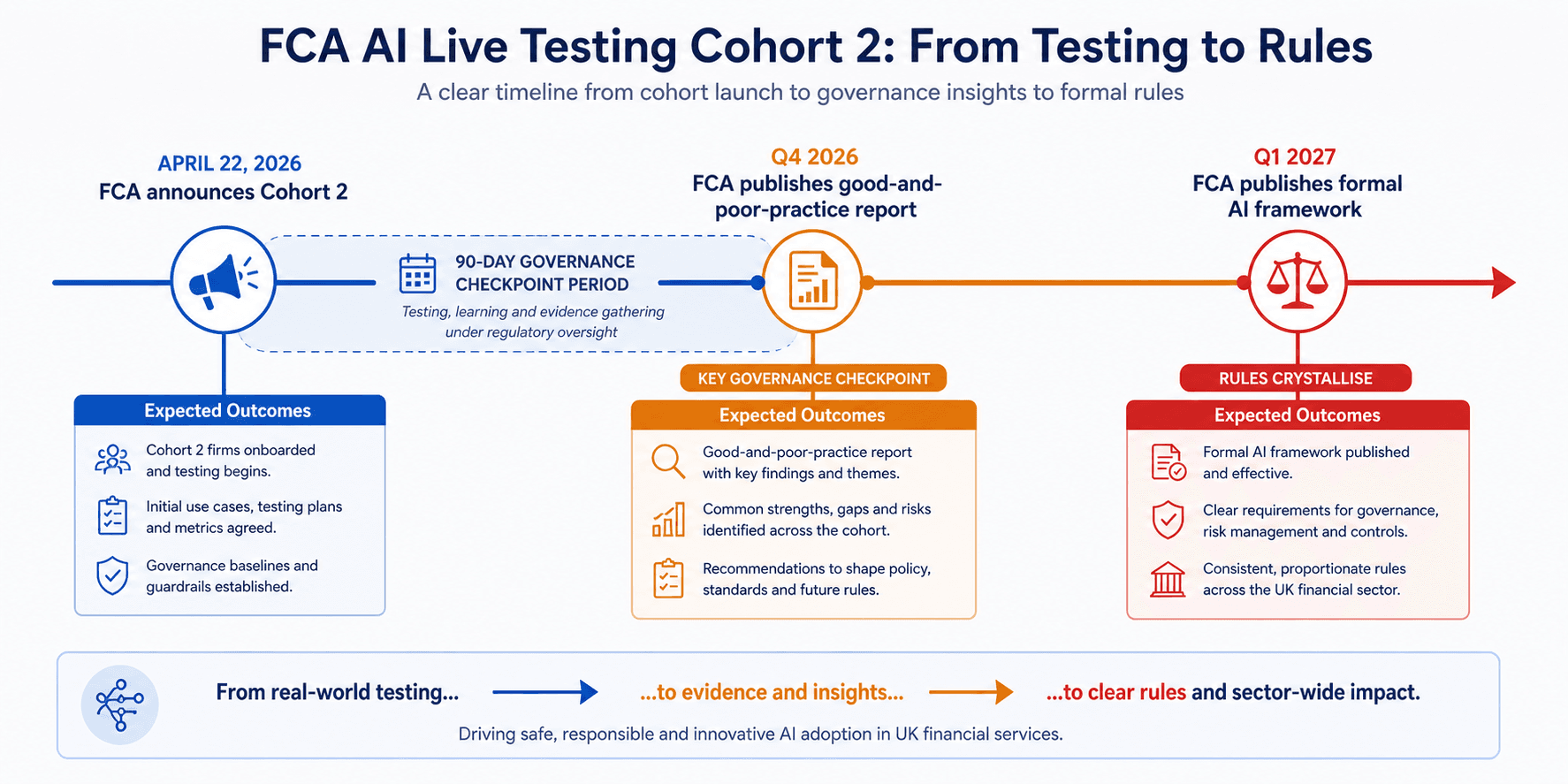

• Why banks not in the cohort have 6-9 months to establish AI governance baselines before the FCA's good-and-poor-practice report (late Q3 or Q4 2026) and its formal AI framework (Q1 2027)

• How to read the cohort as a transparency window into which AI rules will crystallise first, and in what order

What the FCA AI Live Testing Cohort 2 Just Announced

The eight selected firms span three categories. Barclays, Lloyds Banking Group (including Scottish Widows), and UBS represent tier-1 banking infrastructure. Specialist providers Experian and Aereve bring credit and KYC capabilities, while Coadjute, focused on financial crime compliance, adds deep AML and sanctions-screening expertise. FinTechs GoCardless and Palindrome are testing agentic payment systems in real-time flows.

The FCA's technical partner, Advai, will instrument governance checkpoints at 30, 60, and 90 days, monitoring for data drift, fairness bias, and performance degradation in real time. This is not a loose sandbox; it is production-grade deployment under active regulatory supervision. The checkpoints are designed to catch failures early and to generate the evidence base for permanent rules.
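The announcement does not describe how Advai measures drift. As an illustration only, the sketch below uses the population stability index (PSI), one widely used drift metric, to compare a model's score distribution at each checkpoint against its deployment baseline. The 0.1 and 0.25 cut-offs are a common industry rule of thumb, not FCA or Advai thresholds, and all function names are hypothetical.

```python
import bisect
import math

EPS = 1e-6  # floor for empty bins so the log term stays finite
CHECKPOINT_DAYS = (30, 60, 90)  # checkpoint schedule described for Cohort 2


def bin_shares(values, edges):
    """Share of values in each bin; out-of-range values are clamped to the end bins."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        i = bisect.bisect_right(edges, v) - 1
        i = min(max(i, 0), len(counts) - 1)
        counts[i] += 1
    n = max(len(values), 1)
    return [max(c / n, EPS) for c in counts]


def population_stability_index(baseline, current, edges):
    """PSI = sum over bins of (current - baseline) * ln(current / baseline)."""
    p = bin_shares(baseline, edges)
    q = bin_shares(current, edges)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def drift_status(psi):
    # Rule-of-thumb bands (an assumption, not a regulatory threshold).
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "investigate"
    return "material drift"


def run_checkpoints(baseline, scores_by_day, edges):
    """Evaluate drift at each checkpoint day for which scores are available."""
    report = {}
    for day in CHECKPOINT_DAYS:
        if day in scores_by_day:
            psi = population_stability_index(baseline, scores_by_day[day], edges)
            report[day] = (round(psi, 4), drift_status(psi))
    return report


if __name__ == "__main__":
    edges = [0.0, 0.25, 0.5, 0.75, 1.0]
    baseline = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
    scores_by_day = {
        30: baseline,                                      # unchanged distribution
        90: [0.8, 0.85, 0.9, 0.95, 0.9, 0.85, 0.8, 0.7],  # scores shifted upward
    }
    print(run_checkpoints(baseline, scores_by_day, edges))
```

In this toy run the day-30 scores match the baseline (PSI of 0), while the day-90 scores have shifted into the top bin and are reported as material drift. A production monitor would track features as well as scores, and fairness and performance metrics alongside drift.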

Reading the Use-Case Signals: What the Concentration Tells You

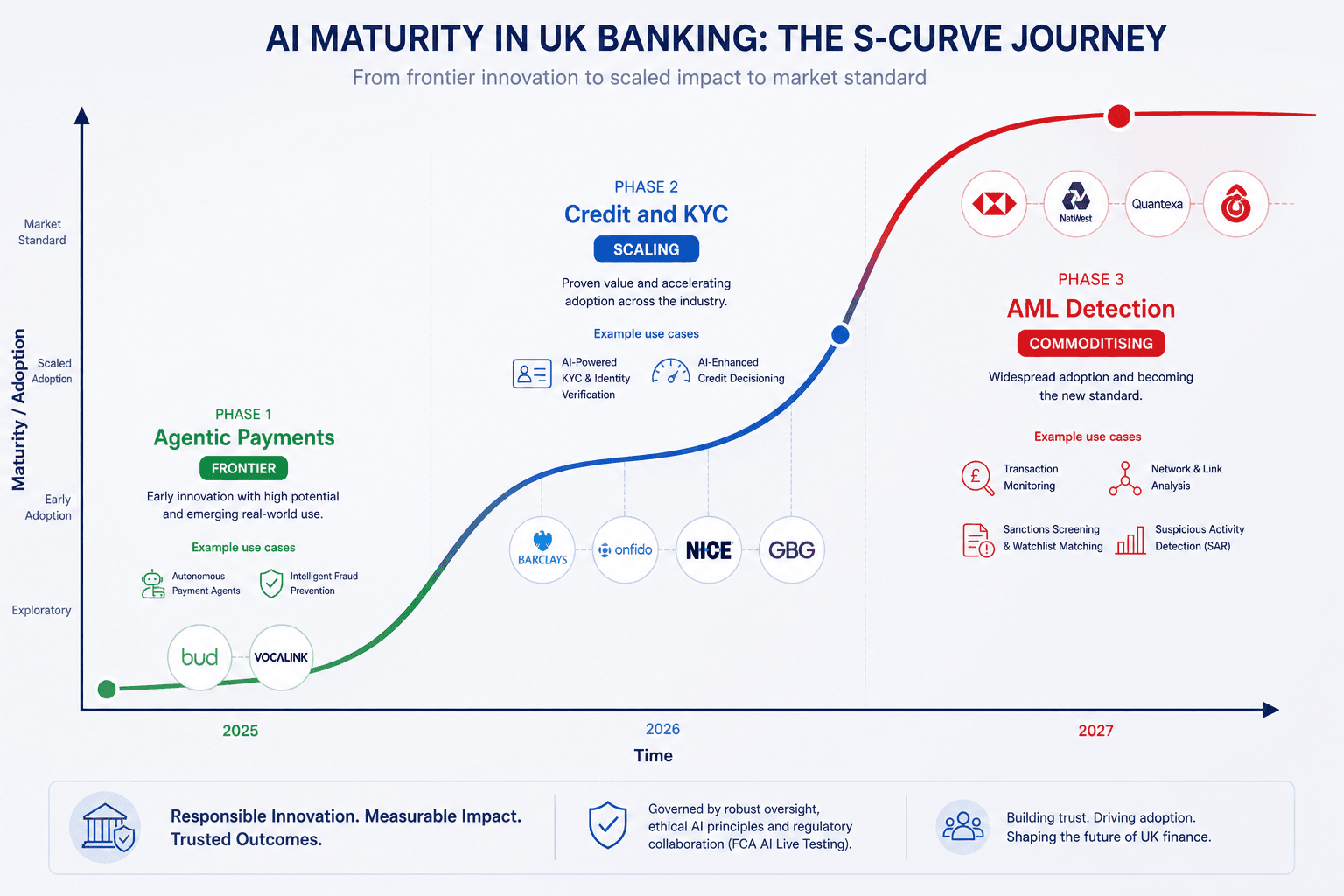

The FCA AI Live Testing cohort distribution is not random. It is a regulatory roadmap. The cohort's composition signals which AI use cases are ready for scaled deployment, which are still maturing, and which remain frontier territory. Understanding this hierarchy is critical for risk teams, compliance officers, and AI engineers in regulated banking.

Four firms are testing AML detection: Barclays, UBS, Coadjute, and Palindrome. This concentration signals the FCA's highest confidence in the maturity of machine-learning-driven transaction monitoring. For tier-1 banks, AML is high-volume, real-time, and critical to regulatory standing. The fact that both Barclays and UBS are testing autonomous pattern recognition suggests the FCA believes AML teams now have the governance infrastructure to let models flag transactions without a human pre-approving every alert, a step that would have been hard to justify two years ago. The inclusion of Coadjute and Palindrome suggests that non-bank providers can now compete in autonomous financial crime detection at scale.
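In practice, letting a model flag transactions without pre-approval of every alert usually means a routing policy: high-confidence alerts are raised automatically, a middle band goes to an analyst, and the rest are cleared. The sketch below is a minimal illustration assuming a model that outputs a risk score in [0, 1]. The threshold values, class names, and queue labels are hypothetical and are not drawn from any cohort firm.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoutingPolicy:
    """Hypothetical score bands for AML alert routing."""
    auto_flag_at: float = 0.90  # at or above: alert raised without analyst pre-approval
    review_at: float = 0.60     # at or above (but below auto_flag_at): analyst decides

    def __post_init__(self):
        if not 0.0 <= self.review_at < self.auto_flag_at <= 1.0:
            raise ValueError("expected 0 <= review_at < auto_flag_at <= 1")


def route_alert(score: float, policy: RoutingPolicy) -> str:
    """Map a model risk score in [0, 1] to a routing decision."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score out of range: {score}")
    if score >= policy.auto_flag_at:
        return "auto_flag"      # raised directly; no analyst pre-approval step
    if score >= policy.review_at:
        return "human_review"   # analyst must decide before an alert is raised
    return "clear"


def route_batch(scores: dict[str, float], policy: RoutingPolicy) -> dict[str, list[str]]:
    """Group transaction IDs by routing decision, preserving input order."""
    queues: dict[str, list[str]] = {"auto_flag": [], "human_review": [], "clear": []}
    for tx_id, score in scores.items():
        queues[route_alert(score, policy)].append(tx_id)
    return queues


if __name__ == "__main__":
    policy = RoutingPolicy()
    print(route_batch({"tx-001": 0.97, "tx-002": 0.72, "tx-003": 0.08}, policy))
    # {'auto_flag': ['tx-001'], 'human_review': ['tx-002'], 'clear': ['tx-003']}
```

Making the bands an explicit, validated object means the thresholds themselves become a governed artefact that can be versioned, reviewed, and tightened if a checkpoint reveals drift.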

Four firms are testing credit scoring and KYC: Barclays, Lloyds, Experian, and Aereve. Credit decisioning and customer authentication are showing signs of commoditisation. The presence of Experian and Aereve signals that specialist providers can now compete on data quality and model performance rather than on their capacity to absorb compliance complexity; in that sense, the market is democratising. Scottish Widows' investment recommendation algorithms suggest that insurance and wealth management are catching up with retail banking in AI adoption. The FCA's confidence in credit use cases suggests that these rules will follow AML but precede agentic systems in the regulatory timeline.
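Fairness testing for credit decisions often starts by comparing approval rates across groups. The sketch below computes each group's approval rate relative to a reference group and flags ratios under 0.8, the "four-fifths" heuristic borrowed from US employment practice. That threshold is an illustrative assumption, not an FCA standard, and the function names are hypothetical; a real assessment would add significance testing and more than one fairness metric.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> approval rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def impact_ratios(decisions, reference_group):
    """Each group's approval rate divided by the reference group's rate."""
    rates = approval_rates(decisions)
    ref = rates[reference_group]
    if ref == 0:
        raise ValueError("reference group has a zero approval rate")
    return {g: r / ref for g, r in rates.items()}


def groups_below_threshold(decisions, reference_group, threshold=0.8):
    # 0.8 is the "four-fifths" heuristic (an assumption, not an FCA rule).
    ratios = impact_ratios(decisions, reference_group)
    return sorted(g for g, r in ratios.items() if r < threshold)


if __name__ == "__main__":
    decisions = ([("A", True)] * 8 + [("A", False)] * 2
                 + [("B", True)] * 5 + [("B", False)] * 5)
    print(impact_ratios(decisions, "A"))           # B: 0.5 / 0.8 = 0.625
    print(groups_below_threshold(decisions, "A"))  # ['B']
```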

Two firms are testing agentic payment systems: GoCardless and Palindrome. This is the frontier. Agentic payments involve autonomous decision-making in transaction execution, fraud prevention, and payment routing, without a human approving each transaction. The smaller group reflects genuine regulatory caution. The FCA is signalling that while these systems can work, they require guardrails: human review for high-risk or out-of-policy actions, transaction limits, real-time reporting, and explainability. For firms building agentic payment infrastructure outside the cohort, this means a 6-9 month runway before governance norms crystallise into permanent rules. Getting the architecture right now is a competitive advantage.
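The four guardrails named above map naturally onto a policy layer that sits between the agent and the payment rail. The sketch below assumes a hypothetical agent that proposes payments together with a plain-language rationale. The limits, class names, and outcome labels are illustrative only, and the in-memory audit log stands in for a real-time reporting feed. Amounts are held in integer pence to avoid floating-point rounding.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PaymentRequest:
    payment_id: str
    payee: str
    amount_pence: int
    rationale: str  # agent's plain-language reason, kept for explainability


@dataclass
class PaymentGuardrail:
    """Hypothetical policy layer between a payment agent and the payment rail."""
    per_payment_limit_pence: int = 50_000   # illustrative: £500
    daily_limit_pence: int = 200_000        # illustrative: £2,000
    spent_today_pence: int = 0
    audit_log: list = field(default_factory=list)

    def decide(self, req: PaymentRequest) -> str:
        if req.amount_pence <= 0:
            outcome, reason = "reject", "non-positive amount"
        elif not req.rationale.strip():
            outcome, reason = "reject", "no rationale supplied"
        elif req.amount_pence > self.per_payment_limit_pence:
            outcome, reason = "human_review", "exceeds per-payment limit"
        elif self.spent_today_pence + req.amount_pence > self.daily_limit_pence:
            outcome, reason = "human_review", "would exceed daily limit"
        else:
            outcome, reason = "execute", "within limits"
            self.spent_today_pence += req.amount_pence
        # Append every decision to the audit log (stand-in for real-time reporting).
        self.audit_log.append({
            "payment_id": req.payment_id,
            "amount_pence": req.amount_pence,
            "outcome": outcome,
            "reason": reason,
            "rationale": req.rationale,
        })
        return outcome


if __name__ == "__main__":
    guard = PaymentGuardrail(daily_limit_pence=70_000)
    requests = [
        PaymentRequest("p1", "Supplier A", 30_000, "Invoice due today"),
        PaymentRequest("p2", "Supplier B", 60_000, "Quarterly contract payment"),
        PaymentRequest("p3", "Supplier C", 45_000, "Invoice due today"),
        PaymentRequest("p4", "Supplier D", 10_000, ""),
    ]
    for r in requests:
        print(r.payment_id, guard.decide(r))
    # p1 execute, p2 human_review, p3 human_review, p4 reject
```

The design choice worth noting is that the agent never calls the rail directly: every proposed action passes through a deterministic check whose outcome and reason are recorded, so a supervisor can reconstruct why each payment did or did not execute.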

What This Means for Banks Not in the Cohort

If your bank was not selected for Cohort 2, the next 6-9 months are when you establish your AI governance baseline. The regulatory window is open, but it is closing. The firms in the cohort are generating the evidence the FCA will use to write permanent rules, and that evidence will become the de facto standard for the industry.

The good news: the cohort firms are testing in real time and, if successful, could pave the way for wider deployment permission across the industry. Their governance success is your governance blueprint. The bad news: the clock is short. The FCA will publish its good-and-poor-practice report in late Q3 or Q4 2026, based on what succeeded, what failed, and which governance checks actually caught problems, and that report is likely to become the benchmark for regulated AI deployment in UK banking.

• Risk teams: start building algorithmic impact assessments now. The FCA AI Approach hints at mandatory assessments in 2027.

• AML teams: document your data pipelines, model validation frameworks, and drift monitoring infrastructure. AML rules will come first, and they will be prescriptive.

• Investment and credit teams: audit your data lineage and fairness testing now; credit scoring rules will follow AML but precede agentic systems.

• Product teams building agentic payments: establish human-in-the-loop workflows and transaction limits before the rules demand them. Being ahead of the curve is a governance strength.
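A governance baseline can start as a structured inventory record for each model, paired with an automated gap check. The fields and per-use-case requirements below are our own assumption of what such a baseline might cover, loosely mirroring the team priorities above. They are not an FCA template, and every name is hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ModelGovernanceRecord:
    """Hypothetical inventory entry for one production model."""
    model_id: str
    use_case: str                                      # "aml", "credit", "kyc" or "agentic_payments"
    owner: str = ""                                    # accountable individual or team
    data_sources: list = field(default_factory=list)   # upstream datasets (lineage)
    validation_report: str = ""                        # reference to validation evidence
    drift_monitoring: str = ""                         # where drift metrics are reviewed
    fairness_tested: bool = False
    human_oversight: str = ""                          # who can override or halt the model


_CORE = ["owner", "data_sources", "validation_report", "drift_monitoring"]

# Assumed minimum evidence per use case (illustrative, not regulatory).
REQUIRED_FIELDS = {
    "aml": _CORE,
    "credit": _CORE + ["fairness_tested"],
    "kyc": _CORE + ["fairness_tested"],
    "agentic_payments": _CORE + ["human_oversight"],
}


def governance_gaps(record: ModelGovernanceRecord) -> list[str]:
    """Return the required fields that are empty or false for this record."""
    try:
        required = REQUIRED_FIELDS[record.use_case]
    except KeyError:
        raise ValueError(f"unknown use case: {record.use_case}") from None
    return [name for name in required if not getattr(record, name)]


if __name__ == "__main__":
    rec = ModelGovernanceRecord(
        model_id="txn-monitoring-v3",
        use_case="aml",
        owner="Financial Crime Analytics",
        data_sources=["core_banking.transactions"],
    )
    print(governance_gaps(rec))  # ['validation_report', 'drift_monitoring']
```

Running the gap check across the whole model inventory gives a simple, repeatable measure of readiness to set against the FCA's report once it lands.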

The Regulatory Calendar Ahead

Two milestones will shape UK banking AI governance in 2026 and early 2027. Understanding the timeline is essential for planning AI deployment and governance investment.

Late Q3 or Q4 2026: The FCA will publish its good-and-poor-practice report, drawing on Cohort 2 evidence gathered through the 90-day governance checkpoint. The report is expected to detail which use cases showed drift or fairness issues, which governance checkpoints caught problems (and which proved redundant), and emerging rules for autonomous decision-making thresholds. It should also set expectations for model explainability in AML, credit, and KYC. It will be the regulator's first public account of how AI models fail in live deployment and how those failures can be detected. Banks not in the cohort can use it to validate their own governance frameworks.

Q1 2027: The FCA is expected to publish its formal AI framework for regulated financial services. Likely outcomes include prescriptive rules on when agentic systems require human sign-off, model governance roadmaps for tier-1 and tier-2 firms, timelines for mandatory algorithmic impact assessments, and specific provisions for black-box models in high-impact use cases (credit and AML). Banks that have not yet established governance will face sharp competitive friction and potential enforcement risk. Those with governance baselines in place will have a first-mover advantage in interpreting and applying the new rules.

Why This Matters: UK vs. EU Regulation

The FCA's approach is sandbox-first: permitting firms to test production-grade AI under supervision, then publishing rules based on what worked. The EU AI Act is rules-first: it sets fixed risk categories and compliance obligations upfront, then relies on implementation and enforcement. The UK cohort shows how the sandbox model can grant deployment permission faster while grounding the eventual rules in evidence. Banks deciding whether to roll out AI in London or Frankfurt should read the Cohort 2 selection as a signal that UK regulatory maturity for AI is accelerating relative to the EU's slower, more prescriptive path.

For more context on how this compares to the EU approach, see our earlier analysis: The EU AI Act in 2026: What It Actually Means for Business and Innovation

The Bottom Line

Eight firms in the FCA AI Live Testing Cohort 2 are not just testing models; they are writing the regulatory playbook. The use-case concentration tells you which AI applications are mature enough for scaled deployment (AML detection), which are scaling (credit scoring and KYC), and which are still frontier territory (agentic payments). If your bank was not selected, now is the time to establish your governance baseline. The rules are coming, and they will be written from the evidence these eight firms generate over the next 90 days. Start now.

Continue Reading

1. The EU AI Act in 2026: What It Actually Means for Business and Innovation

2. Regulation Meets Innovation: How AI Governance Is Reshaping Tech in Europe

3. Explainable AI in Credit Risk: Why Banks Cannot Afford Black Boxes